- Skip to main content

- Skip to primary sidebar

- Skip to footer

- QuestionPro

- Solutions Industries Gaming Automotive Sports and events Education Government Travel & Hospitality Financial Services Healthcare Cannabis Technology Use Case NPS+ Communities Audience Contactless surveys Mobile LivePolls Member Experience GDPR Positive People Science 360 Feedback Surveys

- Resources Blog eBooks Survey Templates Case Studies Training Help center

Home Market Research

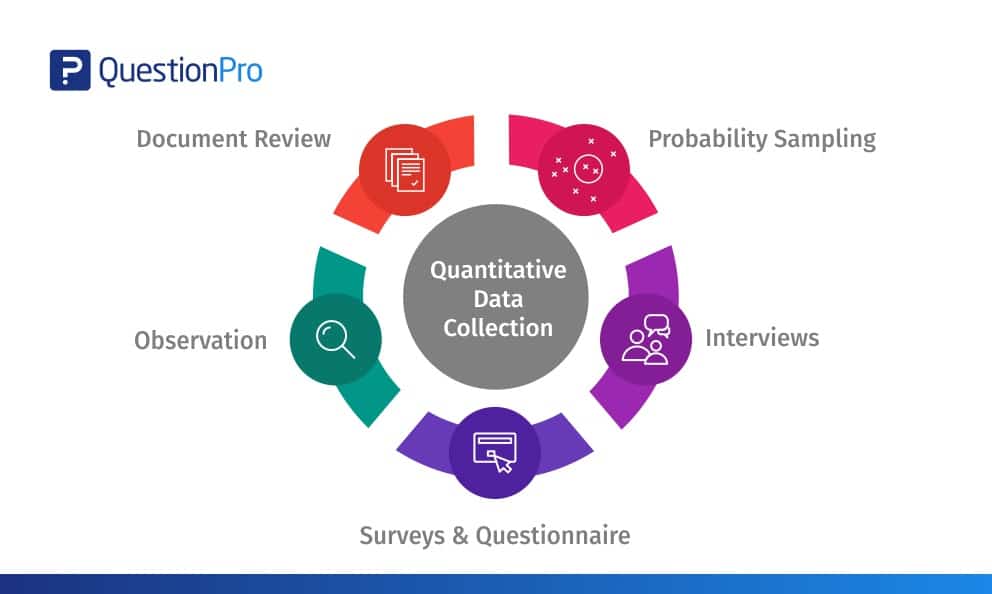

Quantitative Data Collection: Best 5 methods

In contrast to qualitative data , quantitative data collection is everything about figures and numbers. Researchers often rely on quantitative data when they intend to quantify attributes, attitudes, behaviors, and other defined variables with a motive to either back or oppose the hypothesis of a specific phenomenon by contextualizing the data obtained via surveying or interviewing the study sample.

Content Index

What is Quantitative Data Collection?

Importance of quantitative data collection, probability sampling, surveys/questionnaires, observations, document review in quantitative data collection.

Quantitative data collection refers to the collection of numerical data that can be analyzed using statistical methods. This type of data collection is often used in surveys, experiments, and other research methods. It measure variables and establish relationships between variables. The data collected through quantitative methods is typically in the form of numbers, such as response frequencies, means, and standard deviations, and can be analyzed using statistical software.

LEARN ABOUT: Research Process Steps

As a researcher, you do have the option to opt either for data collection online or use traditional data collection methods via appropriate research. Quantitative data collection is important for several reasons:

- Objectivity: Quantitative data collection provides objective and verifiable information, as the data is collected in a systematic and standardized manner.

- Generalizability: The results from quantitative data collection can be generalized to a larger population, making it an effective way to study large groups of people.

- Precision: Numerical data allows for precise measurement and unit of analysis , providing more accurate results than other data collection forms.

- Hypothesis testing: Quantitative data collection allows for testing hypotheses and theories, leading to a better understanding of the relationships between variables.

- Comparison: Quantitative data collection allows for data comparison and analysis. It can be useful in making decisions and identifying trends or patterns.

- Replicability: The numerical nature of quantitative data makes it easier to replicate research results. It is essential for building knowledge in a particular field.

Quantitative data collection provides valuable information for understanding complex phenomena and making informed decisions based on empirical evidence.

LEARN ABOUT: Best Data Collection Tools

Methods used for Quantitative Data Collection

A data that can be counted or expressed in numerical’s constitute the quantitative data. It is commonly used to study the events or levels of concurrence. And is collected through a Structured Question & structured questionnaire asking questions starting with “how much” or “how many.” As the quantitative data is numerical, it represents both definitive and objective data. Furthermore, quantitative information is much sorted for statistical analysis and mathematical analysis, making it possible to illustrate it in the form of charts and graphs.

Discrete and continuous are the two major categories of quantitative data where discreet data have finite numbers and the constant data values falling on a continuum possessing the possibility to have fractions or decimals. If research is conducted to find out the number of vehicles owned by the American household, then we get a whole number, which is an excellent example of discrete data. When research is limited to the study of physical measurements of the population like height, weight, age, or distance, then the result is an excellent example of continuous data.

Any traditional or online data collection method that helps in gathering numerical data is a proven method of collecting quantitative data.

LEARN ABOUT: Survey Sampling

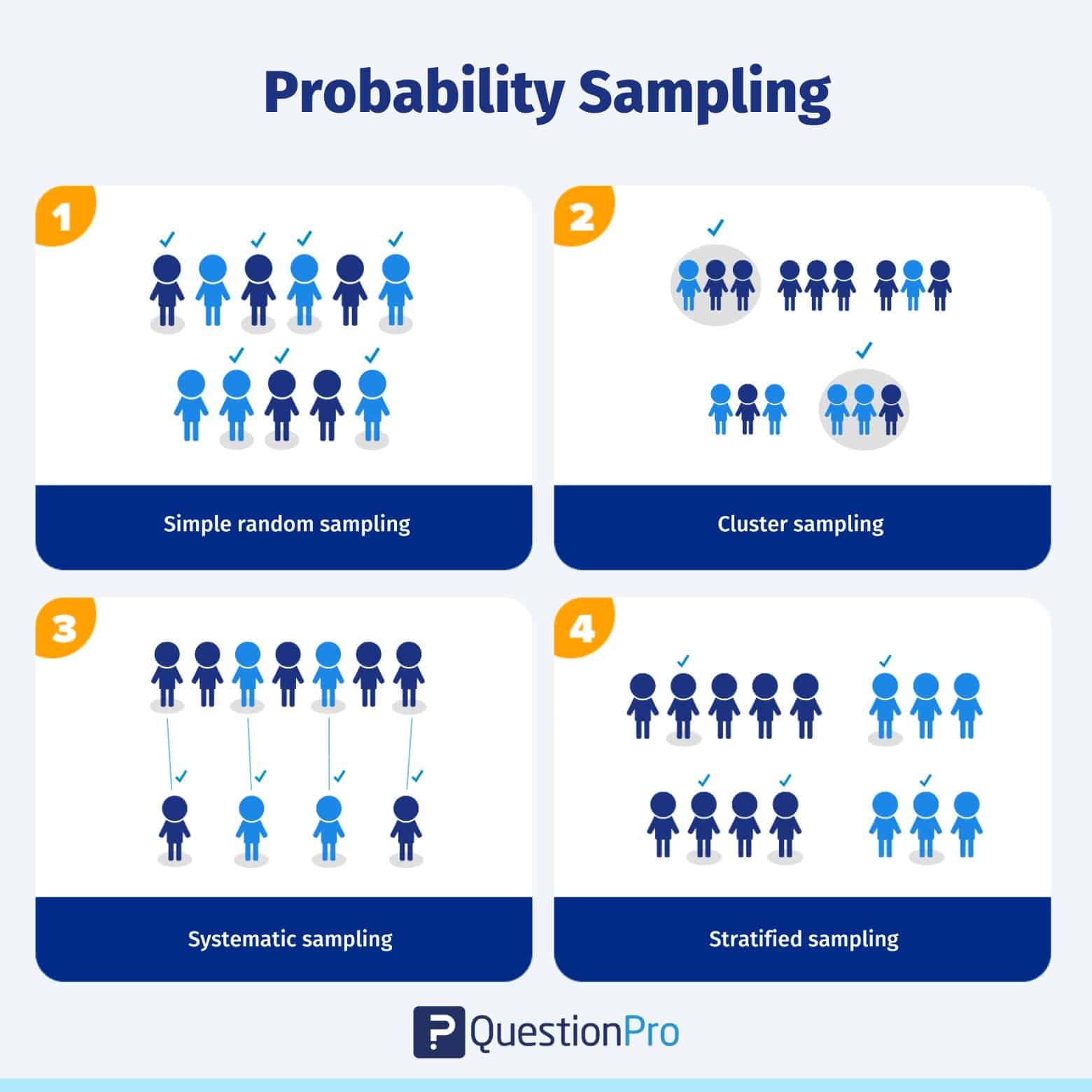

There are four significant types of probability sampling:

- Simple random sampling : More often, the targeted demographic is chosen for inclusion in the sample.

- Cluster sampling : Cluster sampling is a technique in which a population is divided into smaller groups or clusters, and a random sample of these clusters is selected. This method is used when it is impractical or expensive to obtain a random sample from the entire population .

- Systematic sampling : Any of the targeted demographic would be included in the sample, but only the first unit for inclusion in the sample is selected randomly, rest are selected in the ordered fashion as if one out of every ten people on the list .

- Stratified sampling : It allows selecting each unit from a particular group of the targeted audience while creating a sample. It is useful when the researchers are selective about including a specific set of people in the sample, i.e., only males or females, managers or executives, people working within a particular industry.

Interviewing people is a standard method used for data collection . However, the interviews conducted to collect quantitative data are more structured, wherein the researchers ask only a standard set of online questionnaires and nothing more than that.

There are three major types of interviews conducted for data collection

- Telephone interviews: For years, telephone interviews ruled the charts of data collection methods. Nowadays, there is a significant rise in conducting video interviews using the internet, Skype, or similar online video calling platforms.

- Face-to-face interviews: It is a proven technique to collect data directly from the participants. It helps in acquiring quality data as it provides a scope to ask detailed questions and probing further to collect rich and informative data. Literacy requirements of the participant are irrelevant as F2F surveys offer ample opportunities to collect non-verbal data through observation or to explore complex and unknown issues. Although it can be an expensive and time-consuming method, the response rates for F2F interviews are often higher.

- Computer-Assisted Personal Interviewing (CAPI): It is nothing but a similar setup of the face-to-face interview where the interviewer carries a desktop or laptop along with him at the time of interview to upload the data obtained from the interview directly into the database. CAPI saves a lot of time in updating and processing the data and also makes the entire process paperless as the interviewer does not carry a bunch of papers and questionnaires.

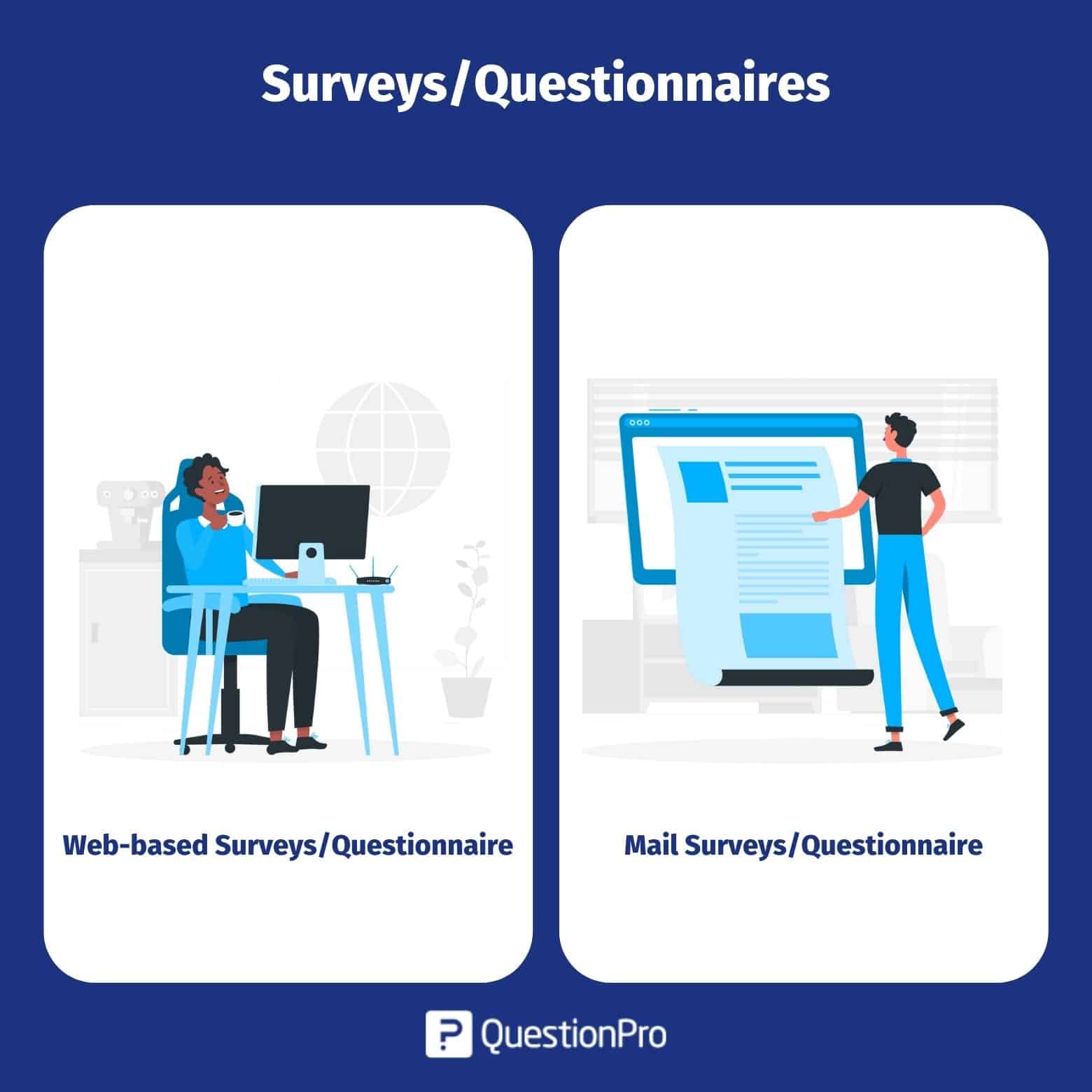

There are two significant types of survey questionnaires used to collect online data for quantitative market research.

- Web-based questionnaire : This is one of the ruling and most trusted methods for internet-based research or online research. In a web-based questionnaire, the receive an email containing the survey link, clicking on which takes the respondent to a secure online survey tool from where he/she can take the survey or fill in the survey questionnaire. Being a cost-efficient, quicker, and having a wider reach, web-based surveys are more preferred by the researchers. The primary benefit of a web-based questionnaire is flexibility. Respondents are free to take the survey in their free time using either a desktop, laptop, tablet, or mobile.

- Mail Questionnaire : In a mail questionnaire, the survey is mailed out to a host of the sample population, enabling the researcher to connect with a wide range of audiences. The mail questionnaire typically consists of a packet containing a cover sheet that introduces the audience about the type of research and reason why it is being conducted along with a prepaid return to collect data online. Although the mail questionnaire has a higher churn rate compared to other quantitative data collection methods, adding certain perks such as reminders and incentives to complete the survey help in drastically improving the churn rate. One of the major benefits of the mail questionnaire is all the responses are anonymous, and respondents are allowed to take as much time as they want to complete the survey and be completely honest about the answer without the fear of prejudice.

LEARN ABOUT: Steps in Qualitative Research

As the name suggests, it is a pretty simple and straightforward method of collecting quantitative data. In this method, researchers collect quantitative data through systematic observations by using techniques like counting the number of people present at the specific event at a particular time and a particular venue or number of people attending the event in a designated place. More often, for quantitative data collection, the researchers have a naturalistic observation approach. It needs keen observation skills and senses for getting the numerical data about the “what” and not about “why” and ”how.”

Naturalistic observation is used to collect both types of data; qualitative and quantitative. However, structured observation is more used to collect quantitative rather than qualitative data collection .

- Structured observation: In this type of observation method, the researcher has to make careful observations of one or more specific behaviors in a more comprehensive or structured setting compared to naturalistic or participant observation . In a structured observation, the researchers, rather than observing everything, focus only on very specific behaviors of interest. It allows them to quantify the behaviors they are observing. When the qualitative observations require a judgment on the part of the observers – it is often described as coding, which requires a clearly defining a set of target behaviors.

Document review is a process used to collect data after reviewing the existing documents. It is an efficient and effective way of gathering data as documents are manageable. Those are the practical resource to get qualified data from the past. Apart from strengthening and supporting the research by providing supplementary research data document review has emerged as one of the beneficial methods to gather quantitative research data.

Three primary document types are being analyzed for collecting supporting quantitative research data.

- Public Records: Under this document review, official, ongoing records of an organization are analyzed for further research. For example, annual reports policy manuals, student activities, game activities in the university, etc.

- Personal Documents: In contrast to public documents, this type of document review deals with individual personal accounts of individuals’ actions, behavior, health, physique, etc. For example, the height and weight of the students, distance students are traveling to attend the school, etc.

- Physical Evidence: Physical evidence or physical documents deal with previous achievements of an individual or of an organization in terms of monetary and scalable growth.

LEARN ABOUT: 12 Best Tools for Researchers

Quantitative data is not about convergent reasoning, but it is about divergent thinking. It deals with the numerical, logic, and an objective stance, by focusing on numeric and unchanging data. More often, data collection methods are used to collect quantitative research data, and the results are dependent on the larger sample sizes that are commonly representing the population researcher intend to study.

Although there are many other methods to collect quantitative data. Those mentioned above probability sampling, interviews, questionnaire observation, and document review are the most common and widely used methods for data collection.

With QuestionPro, you can precise results, and data analysis . QuestionPro provides the opportunity to collect data from a large number of participants. It increases the representativeness of the sample and providing more accurate results.

FREE TRIAL LEARN MORE

MORE LIKE THIS

Top 20 Employee Engagement Software Solutions

May 3, 2024

15 Best Customer Experience Software of 2024

May 2, 2024

Journey Orchestration Platforms: Top 11 Platforms in 2024

Top 12 Employee Pulse Survey Tools Unlocking Insights in 2024

May 1, 2024

Other categories

- Academic Research

- Artificial Intelligence

- Assessments

- Brand Awareness

- Case Studies

- Communities

- Consumer Insights

- Customer effort score

- Customer Engagement

- Customer Experience

- Customer Loyalty

- Customer Research

- Customer Satisfaction

- Employee Benefits

- Employee Engagement

- Employee Retention

- Friday Five

- General Data Protection Regulation

- Insights Hub

- Life@QuestionPro

- Market Research

- Mobile diaries

- Mobile Surveys

- New Features

- Online Communities

- Question Types

- Questionnaire

- QuestionPro Products

- Release Notes

- Research Tools and Apps

- Revenue at Risk

- Survey Templates

- Training Tips

- Uncategorized

- Video Learning Series

- What’s Coming Up

- Workforce Intelligence

- A/B Monadic Test

- A/B Pre-Roll Test

- Key Driver Analysis

- Multiple Implicit

- Penalty Reward

- Price Sensitivity

- Segmentation

- Single Implicit

- Category Exploration

- Competitive Landscape

- Consumer Segmentation

- Innovation & Renovation

- Product Portfolio

- Marketing Creatives

- Advertising

- Shelf Optimization

- Performance Monitoring

- Better Brand Health Tracking

- Ad Tracking

- Trend Tracking

- Satisfaction Tracking

- AI Insights

- Case Studies

quantilope is the Consumer Intelligence Platform for all end-to-end research needs

5 Methods of Data Collection for Quantitative Research

In this blog, read up on five different data collection techniques for quantitative research studies.

Quantitative research forms the basis for many business decisions. But what is quantitative data collection, why is it important, and which data collection methods are used in quantitative research?

Table of Contents:

- What is quantitative data collection?

- The importance of quantitative data collection

- Methods used for quantitative data collection

- Example of a survey showing quantitative data

- Strengths and weaknesses of quantitative data

What is quantitative data collection?

Quantitative data collection is the gathering of numeric data that puts consumer insights into a quantifiable context. It typically involves a large number of respondents - large enough to extract statistically reliable findings that can be extrapolated to a larger population.

The actual data collection process for quantitative findings is typically done using a quantitative online questionnaire that asks respondents yes/no questions, ranking scales, rating matrices, and other quantitative question types. With these results, researchers can generate data charts to summarize the quantitative findings and generate easily digestible key takeaways.

Back to Table of Contents

The importance of quantitative data collection

Quantitative data collection can confirm or deny a brand's hypothesis, guide product development, tailor marketing materials, and much more. It provides brands with reliable information to make decisions off of (i.e. 86% like lemon-lime flavor or just 12% are interested in a cinnamon-scented hand soap).

Compared to qualitative data collection, quantitative data allows for comparison between insights given higher base sizes which leads to the ability to have statistical significance. Brands can cut and analyze their dataset in a variety of ways, looking at their findings among different demographic groups, behavioral groups, and other ways of interest. It's also generally easier and quicker to collect quantitative data than it is to gather qualitative feedback, making it an important data collection tool for brands that need quick, reliable, concrete insights.

In order to make justified business decisions from quantitative data, brands need to recruit a high-quality sample that's reflective of their true target market (one that's comprised of all ages/genders rather than an isolated group). For example, a study into usage and attitudes around orange juice might include consumers who buy and/or drink orange juice at a certain frequency or who buy a variety of orange juice brands from different outlets.

Methods used for quantitative data collection

So knowing what quantitative data collection is and why it's important , how does one go about researching a large, high-quality, representative sample ?

Below are five examples of how to conduct your study through various data collection methods :

Online quantitative surveys

Online surveys are a common and effective way of collecting data from a large number of people. They tend to be made up of closed-ended questions so that responses across the sample are comparable; however, a small number of open-ended questions can be included as well (i.e. questions that require a written response rather than a selection of answers in a close-ended list). Open-ended questions are helpful to gather actual language used by respondents on a certain issue or to collect feedback on a view that might not be shown in a set list of responses).

Online surveys are quick and easy to send out, typically done so through survey panels. They can also appear in pop-ups on websites or via a link embedded in social media. From the participant’s point of view, online surveys are convenient to complete and submit, using whichever device they prefer (mobile phone, tablet, or computer). Anonymity is also viewed as a positive: online survey software ensures respondents’ identities are kept completely confidential.

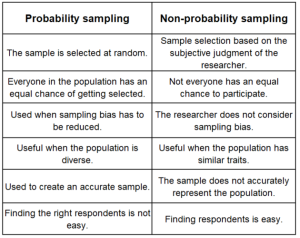

To gather respondents for online surveys, researchers have several options. Probability sampling is one route, where respondents are selected using a random selection method. As such, everyone within the population has an equal chance of getting selected to participate.

There are four common types of probability sampling .

- Simple random sampling is the most straightforward approach, which involves randomly selecting individuals from the population without any specific criteria or grouping.

- Stratified random sampling divides the population into subgroups (strata) and selects a random sample from each stratum. This is useful when a population includes subgroups that you want to be sure you cover in your research.

- Cluster sampling divides the population into clusters and then randomly selects some of the clusters to sample in their entirety. This is useful when a population is geographically dispersed and it would be impossible to include everyone.

- Systematic sampling begins with a random starting point and then selects every nth member of the population after that point (i.e. every 15th respondent).

Learn how to leverage AI to help generate your online quantitative survey inputs:

While online surveys are by far the most common way to collect quantitative data in today’s modern age, there are still some harder-to-reach respondents where other mediums can be beneficial; for example, those who aren’t tech-savvy or who don’t have a stable internet connection. For these audiences, offline surveys may be needed.

Offline quantitative surveys

Offline surveys (though much rarer to come across these days) are a way of gathering respondent feedback without digital means. This could be something like postal questionnaires that are sent out to a sample population and asked to return the questionnaire by mail (like the Census) or telephone surveys where questions are asked of respondents over the phone.

Offline surveys certainly take longer to collect data than online surveys and they can become expensive if the population is difficult to reach (requiring a higher incentive). As with online surveys, anonymity is protected, assuming the mail is not intercepted or lost.

Despite the major difference in data collection to an online survey approach, offline survey data is still reported on in an aggregated, numeric fashion.

In-person interviews are another popular way of researching or polling a population. They can be thought of as a survey but in a verbal, in-person, or virtual face-to-face format. The online format of interviews is becoming more popular nowadays, as it is cheaper and logistically easier to organize than in-person face-to-face interviews, yet still allows the interviewer to see and hear from the respondent in their own words.

Though many interviews are collected for qualitative research, interviews can also be leveraged quantitatively; like a phone survey, an interviewer runs through a survey with the respondent, asking mainly closed-ended questions (yes/no, multiple choice questions, or questions with rating scales that ask how strongly the respondent agrees with statements). The advantage of structured interviews is that the interviewer can pace the survey, making sure the respondent gives enough consideration to each question. It also adds a human touch, which can be more engaging for some respondents. On the other hand, for more sensitive issues, respondents may feel more inclined to complete a survey online for a greater sense of anonymity - so it all depends on your research questions, the survey topic, and the audience you're researching.

Observations

Observation studies in quantitative research are similar in nature to a qualitative ethnographic study (in which a researcher also observes consumers in their natural habitats), yet observation studies for quant research remain focused on the numbers - how many people do an action, how much of a product consumer pick up, etc.

For quantitative observations, researchers will record the number and types of people who do a certain action - such as choosing a specific product from a grocery shelf, speaking to a company representative at an event, or how many people pass through a certain area within a given timeframe. Observation studies are generally structured, with the observer asked to note behavior using set parameters. Structured observation means that the observer has to hone in on very specific behaviors, which can be quite nuanced. This requires the observer to use his/her own judgment about what type of behavior is being exhibited (e.g. reading labels on products before selecting them; considering different items before making the final choice; making a selection based on price).

Document reviews and secondary data sources

A fifth method of data collection for quantitative research is known as secondary research : reviewing existing research to see how it can contribute to understanding a new issue in question. This is in contrast to the primary research methods above, which is research that is specially commissioned and carried out for a research project.

There are numerous secondary data sources that researchers can analyze such as public records, government research, company databases, existing reports, paid-for research publications, magazines, journals, case studies, websites, books, and more.

Aside from using secondary research alone, secondary research documents can also be used in anticipation of primary research, to understand which knowledge gaps need to be filled and to nail down the issues that might be important to explore further in a primary research study. Back to Table of Contents

Example of a survey showing quantitative data

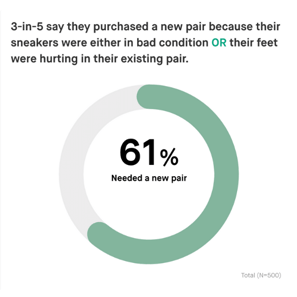

The below study shows what quantitative data might look like in a final study dashboard, taken from quantilope's Sneaker category insights study .

The study includes a variety of usage and attitude metrics around sneaker wear, sneaker purchases, seasonality of sneakers, and more. Check out some of the data charts below showing these quantitative data findings - the first of which even cuts the quantitative data findings by demographics.

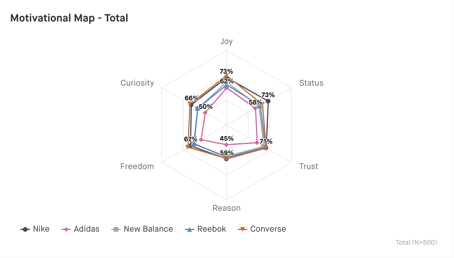

Beyond these basic usage and attitude (or, descriptive) data metrics, quantitative data also includes advanced methods - such as implicit association testing. See what these quantitative data charts look like from the same sneaker study below:

These are just a few examples of how a researcher or insights team might show their quantitative data findings. However, there are many ways to visualize quantitative data in an insights study, from bar charts, column charts, pie charts, donut charts, spider charts, and more, depending on what best suits the story your data is telling. Back to Table of Contents

Strengths and weaknesses of quantitative data collection

quantitative data is a great way to capture informative insights about your brand, product, category, or competitors. It's relatively quick, depending on your sample audience, and more affordable than other data collection methods such as qualitative focus groups. With quantitative panels, it's easy to access nearly any audience you might need - from something as general as the US population to something as specific as cannabis users . There are many ways to visualize quantitative findings, making it a customizable form of insights - whether you want to show the data in a bar chart, pie chart, etc.

For those looking for quick, affordable, actionable insights, quantitative studies are the way to go.

quantitative data collection, despite the many benefits outlined above, might also not be the right fit for your exact needs. For example, you often don't get as detailed and in-depth answers quantitatively as you would with an in-person interview, focus group, or ethnographic observation (all forms of qualitative research). When running a quantitative survey, it’s best practice to review your data for quality measures to ensure all respondents are ones you want to keep in your data set. Fortunately, there are a lot of precautions research providers can take to navigate these obstacles - such as automated data cleaners and data flags. Of course, the first step to ensuring high-quality results is to use a trusted panel provider. Back to Table of Contents

Quantitative research typically needs to undergo statistical analysis for it to be useful and actionable to any business. It is therefore crucial that the method of data collection, sample size, and sample criteria are considered in light of the research questions asked.

quantilope’s online platform is ideal for quantitative research studies. The online format means a large sample can be reached easily and quickly through connected respondent panels that effectively reach the desired target audience. Response rates are high, as respondents can take their survey from anywhere, using any device with internet access.

Surveys are easy to build with quantilope’s online survey builder. Simply choose questions to include from pre-designed survey templates or build your own questions using the platform’s drag & drop functionality (of which both options are fully customizable). Once the survey is live, findings update in real-time so that brands can get an idea of consumer attitudes long before the survey is complete. In addition to basic usage and attitude questions, quantilope’s suite of advanced research methodologies provides an AI-driven approach to many types of research questions. These range from exploring the features of products that drive purchase through a Key Driver Analysis , compiling the ideal portfolio of products using a TURF , or identifying the optimal price point for a product or service using a Price Sensitivity Meter (PSM) .

Depending on the type of data sought it might be worth considering a mixed-method approach, including both qual and quant in a single research study. Alongside quantitative online surveys, quantilope’s video research solution - inColor , offers qualitative research in the form of videoed responses to survey questions. inColor’s qualitative data analysis includes an AI-drive read on respondent sentiment, keyword trends, and facial expressions.

To find out more about how quantilope can help with any aspect of your research design and to start conducting high-quality, quantitative research, get in touch below:

Get in touch to learn more about quantitative research studies!

Related posts, non-probability sampling: when and how to use it effectively, survey results: how to analyze data and report on findings, how florida's natural leveraged better brand health tracking, quirk's virtual event: fab-brew-lous collaboration: product innovations from the melitta group.

Want to create or adapt books like this? Learn more about how Pressbooks supports open publishing practices.

3.3 Methods of Quantitative Data Collection

Data collection is the process of gathering information for research purposes. Data collection methods in quantitative research refer to the techniques or tools used to collect data from participants or units in a study. Data are the most important asset for any researcher because they provide the researcher with the knowledge necessary to confirm or refute their research hypothesis. 2 The choice of data collection method will depend on the research question, the study design, the type of data to be collected, and the available resources. There are two main types of data which are primary data and secondary data. 34 These data types and their examples are discussed below.

Data Sources

Secondary data

Secondary data is data that is already in existence and was collected for other purposes and not for the sole purpose of a researcher’s project. 34 These pre-existing data include data from surveys, administrative records, medical records, or other sources (databases, internet). Examples of these data sources include census data, vital registration (birth and death), registries of notifiable diseases, hospital data and health-related data such as the national health survey data and national drug strategy household survey. 2 While secondary data are population-based, quicker to access, and cheaper to collect than primary data, there are some drawbacks to this data source. Potential disadvantages include accuracy of the data, completeness, and appropriateness of the data, given that the data was collected for an alternative purpose. 2

Primary data

Primary data is collected directly from the study participants and used expressly for research purposes. 34 The data collected is specifically targeted at the research question, hypothesis and aims. Examples of primary data include observations and surveys (questionnaires). 34

- Observations: In quantitative research, observations entail systematically watching and recording the events or behaviours of interest. Observations can be used to collect information on variables that may be difficult to quantify through self-reported methods. Observations, for example, can be used to obtain clinical measurements involving the use of standardised instruments or tools to measure physical, cognitive, or other variables of interest. Other examples include experimental or laboratory studies that necessitate the collection of physiological data such as blood pressure, heart rate, urine, e.t.c. 2

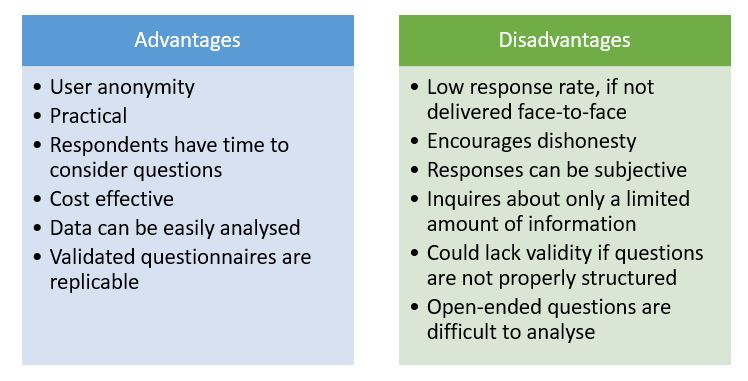

- Surveys: While observations are useful data collection methods, surveys are more commonly used data collection methods in healthcare research. 2, 34 Surveys or questionnaires are designed to seek specific information such as knowledge, beliefs, attitudes and behaviour from respondents. 2, 34 Surveys can be employed as a single research tool (as in a cross-sectional survey) or as part of clinical trials or epidemiological studies. 2, 34 They can be administered face-to-face, via telephone, paper-based, computer-based or a combination of the different methods. 2 Figure 3.7 outlines some advantages and disadvantages of questionnaires/surveys.

Designing a survey/questionnaire

A questionnaire is a research tool that consists of questions that are designed to collect information and generate statistical data from a specified group of people (target population). There are two main considerations in relation to design principles, and these are (1) content and (2) layout and sequence. 36 In terms of content, it is important to review the literature for related validated survey tools, as this saves time and allows for the comparison of results. Additionally, researchers need to minimise complexity by using simple direct language, including only relevant and accurate questions, with no jargon. 36 Concerning layout and sequence, there should be a logical flow of questions from general and easier to more sensitive ones, and the questionnaire should be as short as possible and NOT overcrowded. 36 The following steps can be used to develop a survey/ questionnaire.

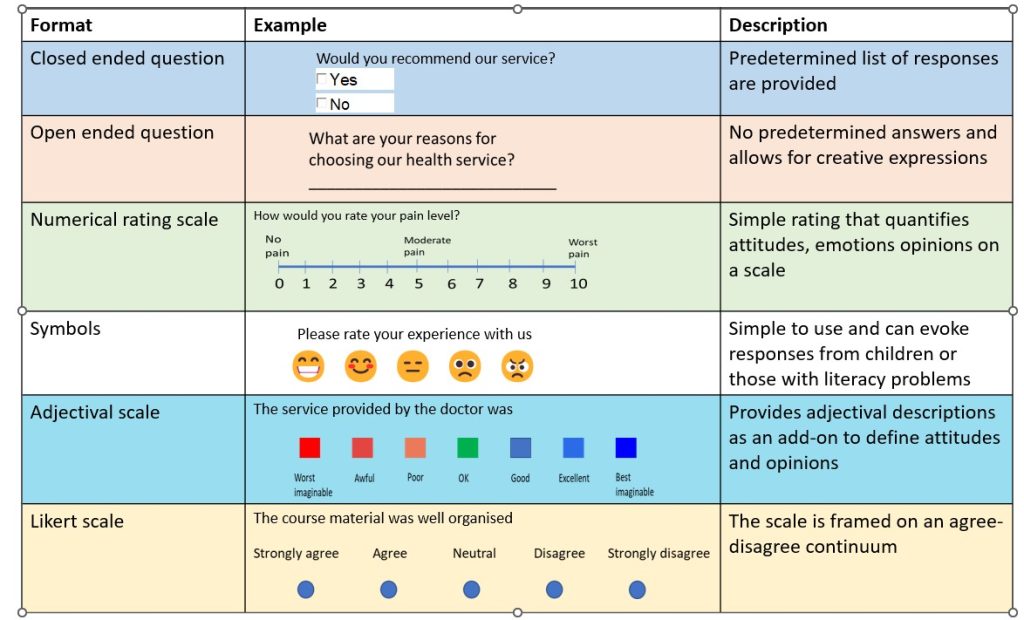

Question Formats

Open and closed-ended questions are the two main types of question formats. 2 Open-ended questions allow respondents to express their thoughts without being constrained by the available options. 2, 38 Open-ended questions are chosen if the options are many and the range of answers is unknown. 38

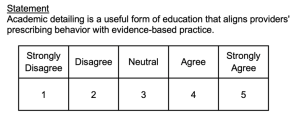

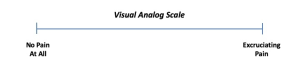

On the other hand, closed-ended questions provide respondents with alternatives and require that they select one or more options from a list. 38 The question type is favoured if the choices are few and the range of responses is well-known. 38 However, other question formats may be used when assessing things on a continuum, like attitudes and behaviour. These variables can be considered using rating scales like visual analogue scales, adjectival scales and Likert scales. 2 Figure 3.8 presents a visual representation of some question types, including open-ended, closed-ended, likert rating scales, symbols, and visual Analogue Scales.

It is important to carefully craft survey questions to ensure that they are clear, unbiased and accurately capture the information researchers seek to gather. Clearly written questions with consistency in wording increase the likelihood of obtaining accurate and reliable data. Poorly crafted questions, on the other hand, may sway respondents to answer in a particular way which can undermine the validity of the survey. The following are some general guidelines for question wording. 39

Be concise and clear: Ask succinct and precise questions, and do not use ambiguous and vague words. For example, do not ask a patient, “ how was your clinic experience ? What do you mean by clinic experience? Are you referring to their interactions with the nurses, doctors or physiotherapists?

Instead, consider using a better-phrased question such as “ please rate your experience with the doctor during your visit today ”.

Avoid double-barrelled questions. Some questions may have dual questions, for example: Do you think you should eat less and exercise more?

Instead, ask:

- Do you think you should eat less?

- Do you think you should exercise more?

Steer clear of questions that involve negatives: Negatively worded questions can be confusing. For example, I find it difficult to fall asleep unless I take sleeping pills .

A better phrase is, “sleeping pills make it easy for me to fall asleep.”

Ask for specific answers. It is better to ask for more precise information. For example, “what is your age in years?________ Is preferable to -Which age category do you belong to?

☐ <18 years

☐ 18 – 25 years

☐ 25 – 35 years

☐ > 35 years

The options above will give more room for errors because the options are not mutually exclusive (there are overlaps) and not exhaustive (there are older age groups above 35 years).

Avoid leading questions. Leading questions reduces objectivity and make respondents answer in a particular way. Questions related to values and beliefs should be neutrally phrased. For example, the question below is worded in a leading way – Conducting research is challenging. Does research training help to prepare you for your research project?

An appropriate alternative: Research training prepares me for my research project.

Strongly agree Agree Disagree Strongly disagree

An Introduction to Research Methods for Undergraduate Health Profession Students Copyright © 2023 by Faith Alele and Bunmi Malau-Aduli is licensed under a Creative Commons Attribution-NonCommercial 4.0 International License , except where otherwise noted.

Quantitative Methods

- Living reference work entry

- First Online: 11 June 2021

- Cite this living reference work entry

- Juwel Rana 2 , 3 , 4 ,

- Patricia Luna Gutierrez 5 &

- John C. Oldroyd 6

369 Accesses

1 Citations

Quantitative analysis ; Quantitative research methods ; Study design

Quantitative method is the collection and analysis of numerical data to answer scientific research questions. Quantitative method is used to summarize, average, find patterns, make predictions, and test causal associations as well as generalizing results to wider populations. It allows us to quantify effect sizes, determine the strength of associations, rank priorities, and weigh the strength of evidence of effectiveness.

Introduction

This entry aims to introduce the most common ways to use numbers and statistics to describe variables, establish relationships among variables, and build numerical understanding of a topic. In general, the quantitative research process uses a deductive approach (Neuman 2014 ; Leavy 2017 ), extrapolating from a particular case to the general situation (Babones 2016 ).

In practical ways, quantitative methods are an approach to studying a research topic. In research, the...

This is a preview of subscription content, log in via an institution to check access.

Access this chapter

Institutional subscriptions

Babones S (2016) Interpretive quantitative methods for the social sciences. Sociology. https://doi.org/10.1177/0038038515583637

Balnaves M, Caputi P (2001) Introduction to quantitative research methods: an investigative approach. Sage, London

Book Google Scholar

Brenner PS (2020) Understanding survey methodology: sociological theory and applications. Springer, Boston

Google Scholar

Creswell JW (2014) Research design: qualitative, quantitative, and mixed methods approaches. Sage, London

Leavy P (2017) Research design. The Gilford Press, New York

Mertens W, Pugliese A, Recker J (2018) Quantitative data analysis, research methods: information, systems, and contexts: second edition. https://doi.org/10.1016/B978-0-08-102220-7.00018-2

Neuman LW (2014) Social research methods: qualitative and quantitative approaches. Pearson Education Limited, Edinburgh

Treiman DJ (2009) Quantitative data analysis: doing social research to test ideas. Jossey-Bass, San Francisco

Download references

Author information

Authors and affiliations.

Department of Public Health, School of Health and Life Sciences, North South University, Dhaka, Bangladesh

Department of Biostatistics and Epidemiology, School of Health and Health Sciences, University of Massachusetts Amherst, MA, USA

Department of Research and Innovation, South Asia Institute for Social Transformation (SAIST), Dhaka, Bangladesh

Independent Researcher, Masatepe, Nicaragua

Patricia Luna Gutierrez

School of Behavioral and Health Sciences, Australian Catholic University, Fitzroy, VIC, Australia

John C. Oldroyd

You can also search for this author in PubMed Google Scholar

Corresponding author

Correspondence to Juwel Rana .

Editor information

Editors and affiliations.

Florida Atlantic University, Boca Raton, FL, USA

Ali Farazmand

Rights and permissions

Reprints and permissions

Copyright information

© 2021 Springer Nature Switzerland AG

About this entry

Cite this entry.

Rana, J., Gutierrez, P.L., Oldroyd, J.C. (2021). Quantitative Methods. In: Farazmand, A. (eds) Global Encyclopedia of Public Administration, Public Policy, and Governance. Springer, Cham. https://doi.org/10.1007/978-3-319-31816-5_460-1

Download citation

DOI : https://doi.org/10.1007/978-3-319-31816-5_460-1

Received : 31 January 2021

Accepted : 14 February 2021

Published : 11 June 2021

Publisher Name : Springer, Cham

Print ISBN : 978-3-319-31816-5

Online ISBN : 978-3-319-31816-5

eBook Packages : Springer Reference Economics and Finance Reference Module Humanities and Social Sciences Reference Module Business, Economics and Social Sciences

- Publish with us

Policies and ethics

- Find a journal

- Track your research

Quantitative Data Collection Methods

Quantitative research methods describe and measure the level of occurrences on the basis of numbers and calculations. Moreover, the questions of “how many?” and “how often?” are often asked in quantitative studies. Accordingly, quantitative data collection methods are based on numbers and mathematical calculations.

Quantitative research can be described as ‘entailing the collection of numerical data and exhibiting the view of relationship between theory and research as deductive, a predilection for natural science approach, and as having an objectivist conception of social reality’ [1] . In other words, quantitative studies mainly examine relationships between numerically measured variables with the application of statistical techniques.

Quantitative data collection methods are based on random sampling and structured data collection instruments. Findings of quantitative studies are usually easy to present, summarize, compare and generalize.

Qualitative studies , on the contrary, are usually based on non-random sampling methods and use non-quantifiable data such as words, feelings, emotions ect. Table below illustrates the main differences between qualitative and quantitative data collection and research methods:

Main differences between quantitative and qualitative methods

The most popular quantitative data collection methods include the following:

- Face-to-face interviews;

- Telephone interviews;

- Computer-Assisted Personal Interviewing (CAPI).

- Internet-based questionnaire;

- Mail questionnaire;

- Face-to-face survey.

- Observations . The type of observation that can be used to collect quantitative data is systematic, where the researcher counts the number of occurrences of phenomenon.

My e-book, The Ultimate Guide to Writing a Dissertation in Business Studies: a step by step approach contains a detailed, yet simple explanation of quantitative methods. The e-book explains all stages of the research process starting from the selection of the research area to writing personal reflection. Important elements of dissertations such as research philosophy, research approach, research design, methods of data collection and data analysis are explained in simple words. John Dudovskiy

[1] Bryman, A. & Bell, E. (2015) “Business Research Methods” 4 th edition, p.160

Popular searches

- How to Get Participants For Your Study

- How to Do Segmentation?

- Conjoint Preference Share Simulator

- MaxDiff Analysis

- Likert Scales

- Reliability & Validity

Request consultation

Do you need support in running a pricing or product study? We can help you with agile consumer research and conjoint analysis.

Looking for an online survey platform?

Conjointly offers a great survey tool with multiple question types, randomisation blocks, and multilingual support. The Basic tier is always free.

Catherine Chipeta

Fully-functional online survey tool with various question types, logic, randomisation, and reporting for unlimited number of surveys.

Monthly newsletter

Get the latest updates about market research, automated tools, product testing and pricing techniques.

We explore quantitative data collection methods’ best use, and the pros and cons of each to help you decide which method to use for your next quantitative study.

Survey Tool

There are many ways to categorise research methods, with most falling into the fields of either qualitative or quantitative.

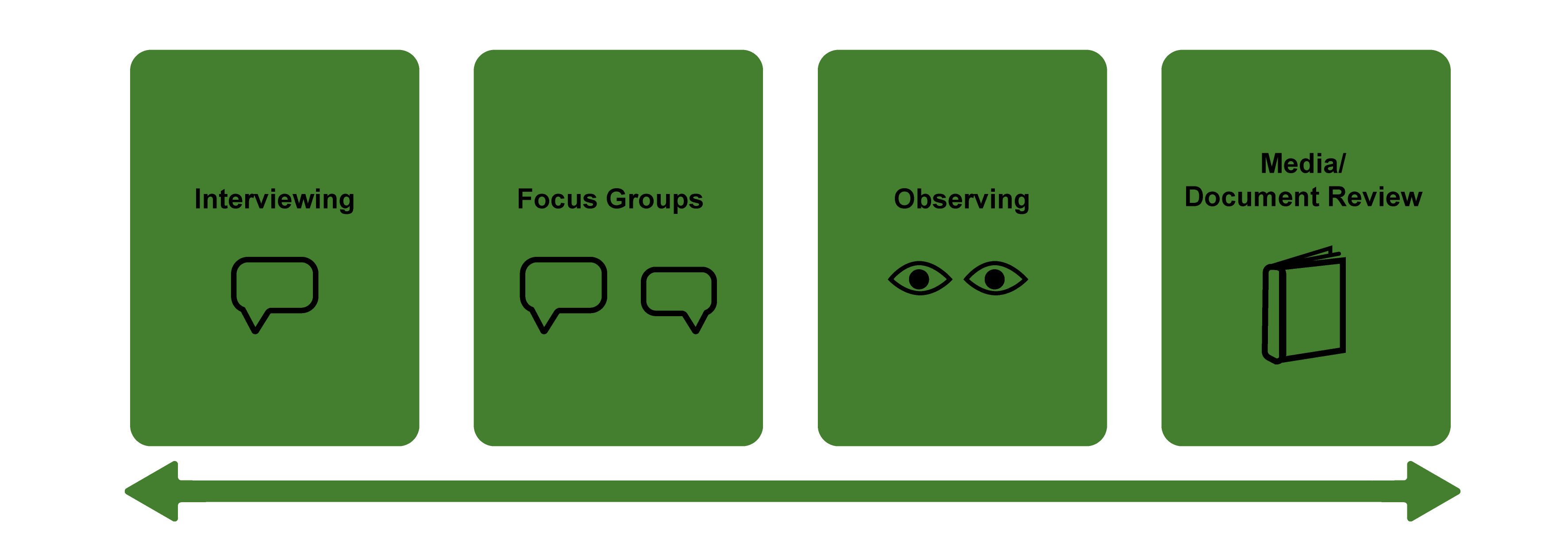

Qualitative research uses non-measurable sources of data and relies mostly on observation techniques to gain insights. It is mostly used to answer questions beginning with “why?” and how?”. Examples of qualitative data collection methods include focus groups, observation, written records, and individual interviews.

Quantitative research presents data in a numerical format, enabling researchers to evaluate and understand this data through statistical analysis . It answers questions such as “who?”, “when?” “what?”, and “where?”. Common examples include interviews , surveys , and case studies/document review. Generally, quantitative data tells us what respondents’ choices are and qualitative tells us why they made those choices.

Once you have determined which type of research you wish to undertake, it is time to select a data collection method. Whilst quantitative and qualitative collection methods often overlap, this article focuses on quantitative data collection methods.

The Nature of Quantitative Observation

As quantitative observation uses numerical measurement , its results are more accurate than qualitative observation methods, which cannot be measured.

To ensure accuracy and consistency, an appropriate sample size needs to be determined for quantitative research. A sample should include enough respondents to make general observations that are most reflective of the whole population.

The more credible the sample size, the more meaningful the insights that the market researcher can draw during the analysis process.

Quantitative surveys are a data collection tool used to gather close-ended responses from individuals and groups. Question types primarily include categorical (e.g. “yes/no”) and interval/ratio questions (e.g. rating-scale, Likert-scale ). They are used to gather information such based upon the behaviours, characteristics, or opinions , and demographic information such as gender, income, occupation.

Surveys are traditionally completed on pen-and-paper but these days are commonly found online , which is a more convenient method.

When to use

Surveys are an ideal choice when you want simple, Quick Feedback which easily translates into statistics for analysis. For example, “60% of respondents think price is the most important factor when making buying decisions”.

- Speedy collection: User-friendly, optimal length surveys are quick to complete and online responses are available instantly.

- Wide reach: Online survey invites can be sent out to hundreds of potential respondents at a time.

- Targeted respondents: Using online panels allows you to target the right respondents for your study based on demographics and other profiling information.

Disadvantages

- Less detail: Surveys often collect less detailed responses than other forms of collection due to the limited options available for respondents to choose.

- Design reliant: If survey design is not effective, the quality of responses will be diminished.

- Potential bias: If respondents feel compelled to answer a question in a particular way due to social or other reasons, this lowers the accuracy of results.

Quantitative interviews are like surveys in that they use a question-and-answer format. The major difference between the two methods is the recording process.

In interviews, respondents are read each question and answer option to them by an interviewer who records responses, whereas in surveys, the respondent reads each question and answers themselves, recording their own response.

For quantitative interviews to be effective, each question and answer must be asked the same way to each respondent, with little to no input from the interviewer.

Quantitative interviews work well when the market researcher is conducting fieldwork to scope potential respondents. For example, approaching buyers of a certain product at a supermarket.

- Higher responsiveness: Potential respondents are more likely to say ‘yes’ to a market researcher in-person than in other ways, e.g. a phone call.

- Clearer understanding: Interviews allow respondents to seek classification from the interviewer if they are confused by a question.

- Less downtime: The market researcher can collect data as soon as the interview is conducted, rather than wait to hear back from the respondent first.

- Interviewer effect: Having an interviewer present questions to the respondent poses the risk of influencing the way in which the respondent answers.

- Time consuming: Interviews usually take longer to complete than other methods, such as surveys.

- Less control: Interviews present more variables, such as tone and pace, which could affect data quality.

Secondary Data Collection Methods

Published case studies and online sources are forms of secondary data, that is, data which has already been prepared and compiled for analysis.

Case studies are descriptive or explanatory publications which detail specific individuals, groups, or events. Whilst case studies are conducted using qualitative methods such as direct observation and unstructured interviewing, researchers can gather statistical data published in these sources to gain quantitative insights.

Other forms of secondary data include journals, books, magazines, and government publications.

Secondary data collection methods are most appropriately used when the market researcher is exploring a topic which already has extensive information and data available and is looking for supplementary insights for guidance.

For example, a study on caffeine consumption habits could draw statistics from existing medical case studies.

- Easier collection: As secondary data is readily available, it is relatively easy to collect for further analysis.

- More credibility: If collected from reputable sources, secondary data can be trusted as accurate and of quality.

- Less expensive: Collecting secondary data often costs a lot less than if the same data were collected primarily.

- Differing context: Secondary data collected will not necessarily align with the market researcher’s research questions or objectives.

- Limited availability: The amount and detail of secondary data available for a particular research topic is varied and not dependable.

- Less control: As secondary data is originally collected externally, there is no control over the quality of available data on a topic.

Quantitative research produces the most accurate and meaningful insights for analysis.

Surveys are a common form of quantitative data collection and can be created and completed online, making them a convenient and accessible choice. However, they must be well-designed and executed to ensure accurate results.

Interviews are an ideal choice for in-person data collection and can improve respondents’ understanding of questions. Time and potential interview bias are drawbacks to this method.

Collecting secondary data is a relatively quick and inexpensive way of gathering supplementary insights for research but there is limited control over context, availability, and quality of the data.

Find the right respondents for your survey

We use quality panel providers to source respondents that suit your specific research needs.

Read these articles next:

How to use the clustering demo.

Easily segment your audience using preference data and other survey answers with the Clustering Demo.

How to perform smart sampling and data checking?

With 5,000+ projects performed on the platform, Conjointly market research experts have compiled common concerns regarding sampling and data checking and provide their suggestions.

How to improve your research with time series analysis

Time series analysis is a powerful tool for analysing data collected throughout an extended period. Here is how you can use it to understand changes in consumer and market trends, and make data-driven decisions.

Which one are you?

I am new to conjointly, i am already using conjointly, cookie consent.

Conjointly uses essential cookies to make our site work. We also use additional cookies in order to understand the usage of the site, gather audience analytics, and for remarketing purposes.

For more information on Conjointly's use of cookies, please read our Cookie Policy .

Do you want to be updated on new features from Conjointly?

We send an occasional email to keep our users informed about new developments on Conjointly : new types of analysis and features for quality insight.

Subscribe to updates from Conjointly

You can always unsubscribe later. Your email will not be shared with other companies.

Have a language expert improve your writing

Run a free plagiarism check in 10 minutes, automatically generate references for free.

- Knowledge Base

- Methodology

- Data Collection Methods | Step-by-Step Guide & Examples

Data Collection Methods | Step-by-Step Guide & Examples

Published on 4 May 2022 by Pritha Bhandari .

Data collection is a systematic process of gathering observations or measurements. Whether you are performing research for business, governmental, or academic purposes, data collection allows you to gain first-hand knowledge and original insights into your research problem .

While methods and aims may differ between fields, the overall process of data collection remains largely the same. Before you begin collecting data, you need to consider:

- The aim of the research

- The type of data that you will collect

- The methods and procedures you will use to collect, store, and process the data

To collect high-quality data that is relevant to your purposes, follow these four steps.

Table of contents

Step 1: define the aim of your research, step 2: choose your data collection method, step 3: plan your data collection procedures, step 4: collect the data, frequently asked questions about data collection.

Before you start the process of data collection, you need to identify exactly what you want to achieve. You can start by writing a problem statement : what is the practical or scientific issue that you want to address, and why does it matter?

Next, formulate one or more research questions that precisely define what you want to find out. Depending on your research questions, you might need to collect quantitative or qualitative data :

- Quantitative data is expressed in numbers and graphs and is analysed through statistical methods .

- Qualitative data is expressed in words and analysed through interpretations and categorisations.

If your aim is to test a hypothesis , measure something precisely, or gain large-scale statistical insights, collect quantitative data. If your aim is to explore ideas, understand experiences, or gain detailed insights into a specific context, collect qualitative data.

If you have several aims, you can use a mixed methods approach that collects both types of data.

- Your first aim is to assess whether there are significant differences in perceptions of managers across different departments and office locations.

- Your second aim is to gather meaningful feedback from employees to explore new ideas for how managers can improve.

Prevent plagiarism, run a free check.

Based on the data you want to collect, decide which method is best suited for your research.

- Experimental research is primarily a quantitative method.

- Interviews , focus groups , and ethnographies are qualitative methods.

- Surveys , observations, archival research, and secondary data collection can be quantitative or qualitative methods.

Carefully consider what method you will use to gather data that helps you directly answer your research questions.

When you know which method(s) you are using, you need to plan exactly how you will implement them. What procedures will you follow to make accurate observations or measurements of the variables you are interested in?

For instance, if you’re conducting surveys or interviews, decide what form the questions will take; if you’re conducting an experiment, make decisions about your experimental design .

Operationalisation

Sometimes your variables can be measured directly: for example, you can collect data on the average age of employees simply by asking for dates of birth. However, often you’ll be interested in collecting data on more abstract concepts or variables that can’t be directly observed.

Operationalisation means turning abstract conceptual ideas into measurable observations. When planning how you will collect data, you need to translate the conceptual definition of what you want to study into the operational definition of what you will actually measure.

- You ask managers to rate their own leadership skills on 5-point scales assessing the ability to delegate, decisiveness, and dependability.

- You ask their direct employees to provide anonymous feedback on the managers regarding the same topics.

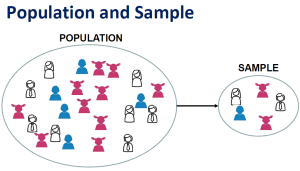

You may need to develop a sampling plan to obtain data systematically. This involves defining a population , the group you want to draw conclusions about, and a sample, the group you will actually collect data from.

Your sampling method will determine how you recruit participants or obtain measurements for your study. To decide on a sampling method you will need to consider factors like the required sample size, accessibility of the sample, and time frame of the data collection.

Standardising procedures

If multiple researchers are involved, write a detailed manual to standardise data collection procedures in your study.

This means laying out specific step-by-step instructions so that everyone in your research team collects data in a consistent way – for example, by conducting experiments under the same conditions and using objective criteria to record and categorise observations.

This helps ensure the reliability of your data, and you can also use it to replicate the study in the future.

Creating a data management plan

Before beginning data collection, you should also decide how you will organise and store your data.

- If you are collecting data from people, you will likely need to anonymise and safeguard the data to prevent leaks of sensitive information (e.g. names or identity numbers).

- If you are collecting data via interviews or pencil-and-paper formats, you will need to perform transcriptions or data entry in systematic ways to minimise distortion.

- You can prevent loss of data by having an organisation system that is routinely backed up.

Finally, you can implement your chosen methods to measure or observe the variables you are interested in.

The closed-ended questions ask participants to rate their manager’s leadership skills on scales from 1 to 5. The data produced is numerical and can be statistically analysed for averages and patterns.

To ensure that high-quality data is recorded in a systematic way, here are some best practices:

- Record all relevant information as and when you obtain data. For example, note down whether or how lab equipment is recalibrated during an experimental study.

- Double-check manual data entry for errors.

- If you collect quantitative data, you can assess the reliability and validity to get an indication of your data quality.

Data collection is the systematic process by which observations or measurements are gathered in research. It is used in many different contexts by academics, governments, businesses, and other organisations.

When conducting research, collecting original data has significant advantages:

- You can tailor data collection to your specific research aims (e.g., understanding the needs of your consumers or user testing your website).

- You can control and standardise the process for high reliability and validity (e.g., choosing appropriate measurements and sampling methods ).

However, there are also some drawbacks: data collection can be time-consuming, labour-intensive, and expensive. In some cases, it’s more efficient to use secondary data that has already been collected by someone else, but the data might be less reliable.

Quantitative research deals with numbers and statistics, while qualitative research deals with words and meanings.

Quantitative methods allow you to test a hypothesis by systematically collecting and analysing data, while qualitative methods allow you to explore ideas and experiences in depth.

Reliability and validity are both about how well a method measures something:

- Reliability refers to the consistency of a measure (whether the results can be reproduced under the same conditions).

- Validity refers to the accuracy of a measure (whether the results really do represent what they are supposed to measure).

If you are doing experimental research , you also have to consider the internal and external validity of your experiment.

In mixed methods research , you use both qualitative and quantitative data collection and analysis methods to answer your research question .

Operationalisation means turning abstract conceptual ideas into measurable observations.

For example, the concept of social anxiety isn’t directly observable, but it can be operationally defined in terms of self-rating scores, behavioural avoidance of crowded places, or physical anxiety symptoms in social situations.

Before collecting data , it’s important to consider how you will operationalise the variables that you want to measure.

Cite this Scribbr article

If you want to cite this source, you can copy and paste the citation or click the ‘Cite this Scribbr article’ button to automatically add the citation to our free Reference Generator.

Bhandari, P. (2022, May 04). Data Collection Methods | Step-by-Step Guide & Examples. Scribbr. Retrieved 6 May 2024, from https://www.scribbr.co.uk/research-methods/data-collection-guide/

Is this article helpful?

Pritha Bhandari

Other students also liked, qualitative vs quantitative research | examples & methods, triangulation in research | guide, types, examples, what is a conceptual framework | tips & examples.

Part I: Sampling, Data Collection, & Analysis in Quantitative Research

In this module, we will focus on how quantitative research collects and analyzes data, as well as methods for obtaining sample population.

- Levels of Measurement

- Reliability and Validity

- Population and Samples

- Common Data Collection Methods

- Data Analysis

- Statistical Significance versus Clinical Significance

Objectives:

- Describe levels of measurement

- Describe reliability and validity as applied to critical appraisal of research

- Differentiate methods of obtaining samples for population generalizability

- Describe common data collection methods in quantitative research

- Describe various data analysis methods in quantitative research

- Differentiate statistical significance versus clinical significance

Levels of measurement

Once researchers have collected their data (we will talk about data collection later in this module), they need methods to organize the data before they even start to think about statistical analyses. Statistical operations depend on a variable’s level of measurement. Think about this similarly to shuffling all of your bills in some type of organization before you pay them. With levels of measurement, we are precisely recording variables in a method to help organize them.

There are four levels of measurement:

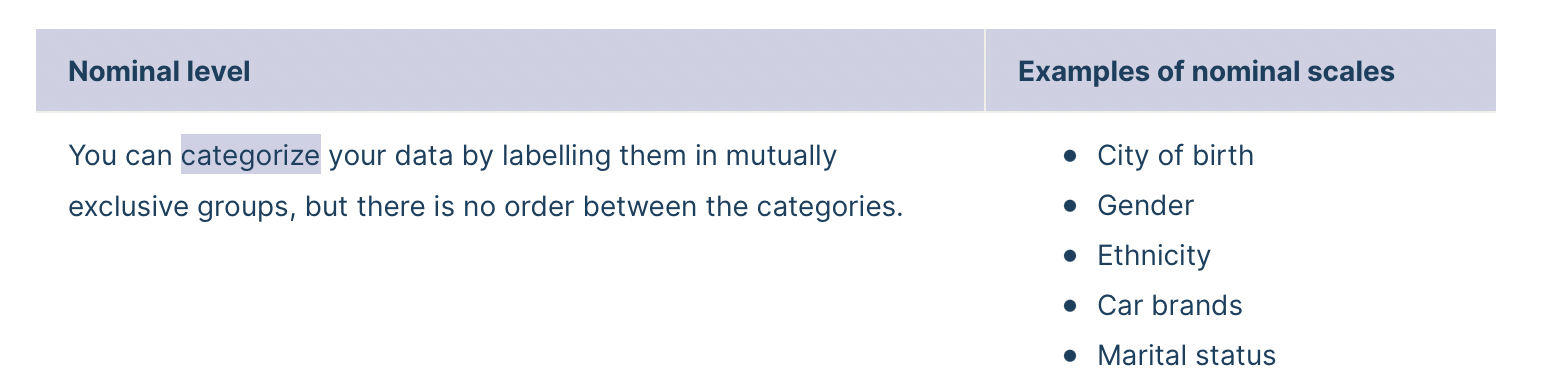

Nominal: The data can only be categorized

Ordinal: The data can be categorized and ranked

Interval: The data can be categorized, ranked, and evenly spaced

Ratio: The data can be categorized, ranked, even spaced, and has a natural zero

Going from lowest to highest, the 4 levels of measurement are cumulative. This means that they each take on the properties of lower levels and add new properties.

- A variable is nominal if the values could be interchanged (e.g. 1 = male, 2 = female OR 1 = female, 2 = male).

- A variable is ordinal if there is a quantitative ordering of values AND if there are a small number of values (e.g. excellent, good, fair, poor).

- A variable is usually considered interval if it is measured with a composite scale or test.

- A variable is ratio level if it makes sense to say that one value is twice as much as another (e.g. 100 mg is twice as much as 50 mg) (Polit & Beck, 2021).

Reliability and Validity as Applied to Critical Appraisal of Research

Reliability measures the ability of a measure to consistently measure the same way. Validity measures what it is supposed to measure. Do we have the need for both in research? Yes! If a variable is measured inaccurately, the data is useless. Let’s talk about why.

For example, let’s set out to measure blood glucose for our study. The validity is how well the measure can determine the blood glucose. If we used a blood pressure cuff to measure blood glucose, this would not be a valid measure. If we used a blood glucose meter, it would be a more valid measure. It does not stop there, however. What about the meter itself? Has it been calibrated? Are the correct sticks for the meter available? Are they expired? Does the meter have fresh batteries? Are the patient’s hands clean?

Reliability wants to know: Is the blood glucose meter measuring the same way, every time?

Validity is asking, “Does the meter measure what it is supposed to measure?” Construct validity: Does the test measure the concept that it’s intended to measure? Content validity: Is the test fully representative of what it aims to measure? Face validity: Does the content of the test appear to be suitable to its aims?

Leibold, 2020

Obtaining Samples for Population Generalizability

In quantitative research, a population is the entire group that the researcher wants to draw conclusions about.

A sample is the specific group that the researcher will actually collect data from. A sample is always a much smaller group of people than the total size of the population. For example, if we wanted to investigate heart failure, there would be no possible way to measure every single human with heart failure. Therefore, researchers will attempt to select a sample of that large population which would most likely reflect (AKA: be a representative sample) the larger population of those with heart failure. Remember, in quantitative research, the results should be generalizable to the population studied.

A researcher will specify population characteristics through eligibility criteria. This means that they consider which characteristics to include ( inclusion criteria ) and which characteristics to exclude ( exclusion criteria ).

For example, if we were studying chemotherapy in breast cancer subjects, we might specify:

- Inclusion Criteria: Postmenopausal women between the ages of 45 and 75 who have been diagnosed with Stage II breast cancer.

- Exclusion Criteria: Abnormal renal function tests since we are studying a combination of drugs that may be nephrotoxic. Renal function tests are to be performed to evaluate renal function and the threshold values that would disqualify the prospective subject is serum creatinine above 1.9 mg/dl.

Sampling Designs:

There are two broad classes of sampling in quantitative research: Probability and nonprobability sampling.

Probability sampling : As the name implies, probability sampling means that each eligible individual has a random chance (same probability) of being selected to participate in the study.

There are three types of probability sampling:

Simple random sampling : Every eligible participant is randomly selected (e.g. drawing from a hat).

Stratified random sampling : Eligible population is first divided into two or more strata (categories) from which randomization occurs (e.g. pollution levels selected from restaurants, bars with ordinances of state laws, and bars with no ordinances).

Systematic sampling : Involves the selection of every __ th eligible participant from a list (e.g. every 9 th person).

Nonprobability sampling : In nonprobability sampling, eligible participants are selected using a subjective (non-random) method.

There are four types of nonprobability sampling:

Convenience sampling : Participants are selected for inclusion in the sample because they are the easiest for the researcher to access. This can be due to geographical proximity, availability at a given time, or willingness to participate in the research.

Quota sampling : Participants are from a very tailored sample that’s in proportion to some characteristic or trait of a population. For example, the researcher could divide a population by the state they live in, income or education level, or sex. The population is divided into groups (also called strata) and samples are taken from each group to meet a quota.

Consecutive sampling : A sampling technique in which every subject meeting the criteria of inclusion is selected until the required sample size is achieved. Consecutive sampling is defined as a nonprobability technique where samples are picked at the ease of a researcher more like convenience sampling, only with a slight variation. Here, the researcher selects a sample or group of people, conducts research over a period, collects results, and then moves on to another sample.

Purposive sampling : A group of non-probability sampling techniques in which units are selected because they have characteristics that the researcher needs in their sample. In other words, units are selected “on purpose” in purposive sampling.

Common Data Collection Methods in Quantitative Research

There are various methods that researchers use to collect data for their studies. For nurse researchers, existing records are an important data source. Researchers need to decide if they will collect new data or use existing data. There is also a wealth of clinical data that can be used for non-research purposed to help answer clinical questions.

Let’s look at some general data collection methods and data sources in quantitative research.

Existing data could include medical records, school records, corporate diaries, letters, meeting minutes, and photographs. These are easy to obtain do not require participation from those being studied.

Collecting new data:

Let’s go over a few methods in which researcher can collect new data. These usually requires participation from those being studied.

Self-reports can be obtained via interviews or questionnaires . Closed-ended questions can be asked (“Within the past 6 months, were you ever a member of a fitness gym?” Yes/No) or open-ended questions such as “Why did you decide to join a fitness gym?” Important to remember (this sometimes throws students off) is that conducting interviews and questionnaires does not mean it is qualitative in nature! Do not let that throw you off in assessing whether a published article is quantitative or qualitative. The nature of the questions, however, may help to determine the type of research (quantitative or qualitative), as qualitative questions deal with ascertaining a very organic collection of people’s experiences in open-ended questions.

Advantages of questionnaires (compared to interviews):

- Questionnaires are less costly and are advantageous for geographically dispersed samples.

- Questionnaires offer the possibility of anonymity, which may be crucial in obtaining information about certain opinions or traits.

Advances of interviews (compared to questionnaires):

- Higher response rates

- Some people cannot fill out a questionnaire.

- Opportunities to clarify questions or to determine comprehension

- Opportunity to collect supplementary data through observation

Psychosocial scales are often utilized within questionnaires or interviews. These can help to obtain attitudes, perceptions, and psychological traits.

Likert Scales :

- Consist of several declarative statements ( items ) expressing viewpoints

- Responses are on an agree/disagree continuum (usually five or seven response options).

- Responses to items are summed to compute a total scale score.

Visual Analog Scale:

- Used to measure subjective experiences (e.g., pain, nausea)

- Measurements are on a straight line measuring 100 mm.

- End points labeled as extreme limits of sensation

Observational Methods include the observation method of data collection involves seeing people in a certain setting or place at a specific time and day. Essentially, researchers study the behavior of the individuals or surroundings in which they are analyzing. This can be controlled, spontaneous, or participant-based research .

When a researcher utilizes a defined procedure for observing individuals or the environment, this is known as structured observation. When individuals are observed in their natural environment, this is known as naturalistic observation. In participant observation, the researcher immerses himself or herself in the environment and becomes a member of the group being observed.

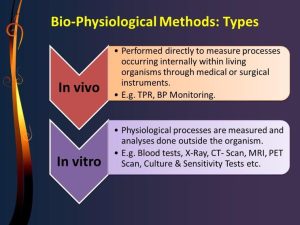

Biophysiologic Measures are defined as ‘those physiological and physical variables that require specialized technical instruments and equipment for their measurement’. Biophysiological measures are the most common instruments for collecting data in medical science studies. To collect valid and reliable data, it is critical to apply these measures appropriately.

- In vivo refers to when research or work is done with or within an entire, living organism. Examples can include studies in animal models or human clinical trials.

- In vitro is used to describe work that’s performed outside of a living organism. This usually involves isolated tissues, organs, or cells.

Let’s watch a video about Sampling and Data Collection that I made a couple of years ago.

Quantitative Data Collection

Quantitative methods to collect data involve measures and numerical information that can be further tested and analyzed with statistical methods. The most common forms of quantitative data collection methods are:

- Experiments

- Observation with instruments

- Spatial data

- Surveys with numerical scaled questions

Below are some resources from the UNT Libraries that provide guidance on quantitative data collection methods and sampling techniques commonly used in social science research.

Qualitative Data Collection

Qualitative data collection focuses on collecting information based on experience, thoughts, and feelings from your subjects or representations from artifacts in your discipline. The most common ways to collect qualitative data are:

- Examining artifacts (e.g. text, documents, images, video, audio, objects)

- Holding focus Group

- Conducting interviews

- Observing phenomena

- Conducting surveys with open-ended questions

Below are some resources from the UNT Libraries that provide guidance on qualitative data collection methods and sampling techniques commonly used in social science research.

- << Previous: Research Design

- Next: Data Analysis and Reporting >>

- Last Updated: Jan 26, 2024 9:43 AM

- URL: https://guides.library.unt.edu/rmss

Additional Links

UNT: Apply now UNT: Schedule a tour UNT: Get more info about the University of North Texas

UNT: Disclaimer | UNT: AA/EOE/ADA | UNT: Privacy | UNT: Electronic Accessibility | UNT: Required Links | UNT: UNT Home

An official website of the United States government

The .gov means it's official. Federal government websites often end in .gov or .mil. Before sharing sensitive information, make sure you're on a federal government site.

The site is secure. The https:// ensures that you are connecting to the official website and that any information you provide is encrypted and transmitted securely.

- Publications

- Account settings

- Browse Titles

NCBI Bookshelf. A service of the National Library of Medicine, National Institutes of Health.

National Research Council; Division of Behavioral and Social Sciences and Education; Commission on Behavioral and Social Sciences and Education; Committee on Basic Research in the Behavioral and Social Sciences; Gerstein DR, Luce RD, Smelser NJ, et al., editors. The Behavioral and Social Sciences: Achievements and Opportunities. Washington (DC): National Academies Press (US); 1988.

The Behavioral and Social Sciences: Achievements and Opportunities.

- Hardcopy Version at National Academies Press

5 Methods of Data Collection, Representation, and Analysis

This chapter concerns research on collecting, representing, and analyzing the data that underlie behavioral and social sciences knowledge. Such research, methodological in character, includes ethnographic and historical approaches, scaling, axiomatic measurement, and statistics, with its important relatives, econometrics and psychometrics. The field can be described as including the self-conscious study of how scientists draw inferences and reach conclusions from observations. Since statistics is the largest and most prominent of methodological approaches and is used by researchers in virtually every discipline, statistical work draws the lion’s share of this chapter’s attention.