Computer Science (since January 1993)

Categories within Computer Science

- cs.AI - Artificial Intelligence: Covers all areas of AI except Vision, Robotics, Machine Learning, Multiagent Systems, and Computation and Language (Natural Language Processing), which have separate subject areas. In particular, includes Expert Systems, Theorem Proving (although this may overlap with Logic in Computer Science), Knowledge Representation, Planning, and Uncertainty in AI. Roughly includes material in ACM Subject Classes I.2.0, I.2.1, I.2.3, I.2.4, I.2.8, and I.2.11.

- cs.CL - Computation and Language: Covers natural language processing. Roughly includes material in ACM Subject Class I.2.7. Note that work on artificial languages (programming languages, logics, formal systems) that does not explicitly address natural-language issues broadly construed (natural-language processing, computational linguistics, speech, text retrieval, etc.) is not appropriate for this area.

- cs.CC - Computational Complexity: Covers models of computation, complexity classes, structural complexity, complexity tradeoffs, upper and lower bounds. Roughly includes material in ACM Subject Classes F.1 (computation by abstract devices), F.2.3 (tradeoffs among complexity measures), and F.4.3 (formal languages), although some material in formal languages may be more appropriate for Logic in Computer Science. Some material in F.2.1 and F.2.2 may also be appropriate here, but is more likely to have Data Structures and Algorithms as the primary subject area.

- cs.CE - Computational Engineering, Finance, and Science: Covers applications of computer science to the mathematical modeling of complex systems in the fields of science, engineering, and finance. Papers here are interdisciplinary and applications-oriented, focusing on techniques and tools that enable challenging computational simulations to be performed, for which the use of supercomputers or distributed computing platforms is often required. Includes material in ACM Subject Classes J.2, J.3, and J.4 (economics).

- cs.CG - Computational Geometry: Roughly includes material in ACM Subject Classes I.3.5 and F.2.2.

- cs.GT - Computer Science and Game Theory: Covers all theoretical and applied aspects at the intersection of computer science and game theory, including work in mechanism design, learning in games (which may overlap with Machine Learning), foundations of agent modeling in games (which may overlap with Multiagent Systems), coordination, specification and formal methods for non-cooperative computational environments. The area also deals with applications of game theory to areas such as electronic commerce.

- cs.CV - Computer Vision and Pattern Recognition: Covers image processing, computer vision, pattern recognition, and scene understanding. Roughly includes material in ACM Subject Classes I.2.10, I.4, and I.5.

- cs.CY - Computers and Society: Covers impact of computers on society, computer ethics, information technology and public policy, legal aspects of computing, computers and education. Roughly includes material in ACM Subject Classes K.0, K.2, K.3, K.4, K.5, and K.7.

- cs.CR - Cryptography and Security: Covers all areas of cryptography and security including authentication, public key cryptosystems, proof-carrying code, etc. Roughly includes material in ACM Subject Classes D.4.6 and E.3.

- cs.DS - Data Structures and Algorithms: Covers data structures and analysis of algorithms. Roughly includes material in ACM Subject Classes E.1, E.2, F.2.1, and F.2.2.

- cs.DB - Databases: Covers database management, data mining, and data processing. Roughly includes material in ACM Subject Classes E.2, E.5, H.0, H.2, and J.1.

- cs.DL - Digital Libraries: Covers all aspects of digital library design and document and text creation. Note that there will be some overlap with Information Retrieval (which is a separate subject area). Roughly includes material in ACM Subject Classes H.3.5, H.3.6, H.3.7, and I.7.

- cs.DM - Discrete Mathematics: Covers combinatorics, graph theory, applications of probability. Roughly includes material in ACM Subject Classes G.2 and G.3.

- cs.DC - Distributed, Parallel, and Cluster Computing: Covers fault-tolerance, distributed algorithms, stability, parallel computation, and cluster computing. Roughly includes material in ACM Subject Classes C.1.2, C.1.4, C.2.4, D.1.3, D.4.5, D.4.7, and E.1.

- cs.ET - Emerging Technologies: Covers approaches to information processing (computing, communication, sensing) and bio-chemical analysis based on alternatives to silicon CMOS-based technologies, such as nanoscale electronic, photonic, spin-based, superconducting, mechanical, bio-chemical and quantum technologies (this list is not exclusive). Topics of interest include (1) building blocks for emerging technologies, their scalability and adoption in larger systems, including integration with traditional technologies, (2) modeling, design and optimization of novel devices and systems, (3) models of computation, algorithm design and programming for emerging technologies.

- cs.FL - Formal Languages and Automata Theory: Covers automata theory, formal language theory, grammars, and combinatorics on words. This roughly corresponds to ACM Subject Classes F.1.1 and F.4.3. Papers dealing with computational complexity should go to cs.CC; papers dealing with logic should go to cs.LO.

- cs.GL - General Literature: Covers introductory material, survey material, predictions of future trends, biographies, and miscellaneous computer-science related material. Roughly includes all of ACM Subject Class A, except it does not include conference proceedings (which will be listed in the appropriate subject area).

- cs.GR - Graphics: Covers all aspects of computer graphics. Roughly includes material in all of ACM Subject Class I.3, except that I.3.5 is likely to have Computational Geometry as the primary subject area.

- cs.AR - Hardware Architecture: Covers systems organization and hardware architecture. Roughly includes material in ACM Subject Classes C.0, C.1, and C.5.

- cs.HC - Human-Computer Interaction: Covers human factors, user interfaces, and collaborative computing. Roughly includes material in ACM Subject Classes H.1.2 and all of H.5, except for H.5.1, which is more likely to have Multimedia as the primary subject area.

- cs.IR - Information Retrieval: Covers indexing, dictionaries, retrieval, content and analysis. Roughly includes material in ACM Subject Classes H.3.0, H.3.1, H.3.2, H.3.3, and H.3.4.

- cs.IT - Information Theory: Covers theoretical and experimental aspects of information theory and coding. Includes material in ACM Subject Class E.4 and intersects with H.1.1.

- cs.LO - Logic in Computer Science: Covers all aspects of logic in computer science, including finite model theory, logics of programs, modal logic, and program verification. Programming language semantics should have Programming Languages as the primary subject area. Roughly includes material in ACM Subject Classes D.2.4, F.3.1, F.4.0, F.4.1, and F.4.2; some material in F.4.3 (formal languages) may also be appropriate here, although Computational Complexity is typically the more appropriate subject area.

- cs.LG - Machine Learning: Papers on all aspects of machine learning research (supervised, unsupervised, reinforcement learning, bandit problems, and so on), including robustness, explanation, fairness, and methodology. cs.LG is also an appropriate primary category for applications of machine learning methods.

- cs.MS - Mathematical Software: Roughly includes material in ACM Subject Class G.4.

- cs.MA - Multiagent Systems: Covers multiagent systems, distributed artificial intelligence, intelligent agents, coordinated interactions, and practical applications. Roughly covers ACM Subject Class I.2.11.

- cs.MM - Multimedia: Roughly includes material in ACM Subject Class H.5.1.

- cs.NI - Networking and Internet Architecture: Covers all aspects of computer communication networks, including network architecture and design, network protocols, and internetwork standards (like TCP/IP). Also includes topics, such as web caching, that are directly relevant to Internet architecture and performance. Roughly includes all of ACM Subject Class C.2 except C.2.4, which is more likely to have Distributed, Parallel, and Cluster Computing as the primary subject area.

- cs.NE - Neural and Evolutionary Computing: Covers neural networks, connectionism, genetic algorithms, artificial life, adaptive behavior. Roughly includes some material in ACM Subject Classes C.1.3, I.2.6, and I.5.

- cs.NA - Numerical Analysis: cs.NA is an alias for math.NA. Roughly includes material in ACM Subject Class G.1.

- cs.OS - Operating Systems: Roughly includes material in ACM Subject Classes D.4.1, D.4.2, D.4.3, D.4.4, D.4.5, D.4.7, and D.4.9.

- cs.OH - Other Computer Science: This is the classification to use for documents that do not fit anywhere else.

- cs.PF - Performance: Covers performance measurement and evaluation, queueing, and simulation. Roughly includes material in ACM Subject Classes D.4.8 and K.6.2.

- cs.PL - Programming Languages: Covers programming language semantics, language features, programming approaches (such as object-oriented programming, functional programming, logic programming). Also includes material on compilers oriented towards programming languages; other material on compilers may be more appropriate in Architecture (AR). Roughly includes material in ACM Subject Classes D.1 and D.3.

- cs.RO - Robotics: Roughly includes material in ACM Subject Class I.2.9.

- cs.SI - Social and Information Networks: Covers the design, analysis, and modeling of social and information networks, including their applications for on-line information access, communication, and interaction, and their roles as datasets in the exploration of questions in these and other domains, including connections to the social and biological sciences. Analysis and modeling of such networks includes topics in ACM Subject Classes F.2, G.2, G.3, H.2, and I.2; applications in computing include topics in H.3, H.4, and H.5; and applications at the interface of computing and other disciplines include topics in J.1-J.7. Papers on computer communication systems and network protocols (e.g. TCP/IP) are generally a closer fit to the Networking and Internet Architecture (cs.NI) category.

- cs.SE - Software Engineering: Covers design tools, software metrics, testing and debugging, programming environments, etc. Roughly includes material in all of ACM Subject Class D.2, except that D.2.4 (program verification) should probably have Logic in Computer Science as the primary subject area.

- cs.SD - Sound: Covers all aspects of computing with sound, and sound as an information channel. Includes models of sound, analysis and synthesis, audio user interfaces, sonification of data, computer music, and sound signal processing. Includes ACM Subject Class H.5.5, and intersects with H.1.2, H.5.1, H.5.2, I.2.7, I.5.4, I.6.3, J.5, and K.4.2.

- cs.SC - Symbolic Computation: Roughly includes material in ACM Subject Class I.1.

- cs.SY - Systems and Control: cs.SY is an alias for eess.SY. This section includes theoretical and experimental research covering all facets of automatic control systems. The section is focused on methods of control system analysis and design using tools of modeling, simulation and optimization. Specific areas of research include nonlinear, distributed, adaptive, stochastic and robust control in addition to hybrid and discrete event systems. Application areas include automotive and aerospace control systems, network control, biological systems, multiagent and cooperative control, robotics, reinforcement learning, sensor networks, control of cyber-physical and energy-related systems, and control of computing systems.

Research Topics & Ideas: CompSci & IT

50+ Computer Science Research Topic Ideas To Fast-Track Your Project

Finding and choosing a strong research topic is the critical first step when it comes to crafting a high-quality dissertation, thesis or research project. If you’ve landed on this post, chances are you’re looking for a computer science-related research topic, but aren’t sure where to start. Here, we’ll explore a variety of CompSci & IT-related research ideas and topic thought-starters, including algorithms, AI, networking, database systems, UX, information security and software engineering.

NB – This is just the start…

The topic ideation and evaluation process has multiple steps. In this post, we’ll kickstart the process by sharing some research topic ideas within the CompSci domain. This is the starting point, but to develop a well-defined research topic, you’ll need to identify a clear and convincing research gap, along with a well-justified plan of action to fill that gap.

If you’re new to the oftentimes perplexing world of research, or if this is your first time undertaking a formal academic research project, be sure to check out our free dissertation mini-course. In it, we cover the process of writing a dissertation or thesis from start to end. Be sure to also sign up for our free webinar that explores how to find a high-quality research topic.

Overview: CompSci Research Topics

- Algorithms & data structures

- Artificial intelligence (AI)

- Computer networking

- Database systems

- Human-computer interaction

- Information security (IS)

- Software engineering

- Examples of CompSci dissertations & theses

Topics & Ideas: Algorithms & Data Structures

- An analysis of neural network algorithms’ accuracy for processing consumer purchase patterns

- A systematic review of the impact of graph algorithms on data analysis and discovery in social media network analysis

- An evaluation of machine learning algorithms used for recommender systems in streaming services

- A review of approximation algorithm approaches for solving NP-hard problems

- An analysis of parallel algorithms for high-performance computing of genomic data

- The influence of data structures on optimal algorithm design and performance in Fintech

- A survey of algorithms applied in Internet of Things (IoT) systems in supply-chain management

- A comparison of streaming algorithm performance for the detection of elephant flows

- A systematic review and evaluation of machine learning algorithms used in facial pattern recognition

- Exploring the performance of a decision tree-based approach for optimizing stock purchase decisions

- Assessing the importance of complete and representative training datasets in agricultural machine learning-based decision-making

- A comparison of deep learning algorithm performance for structured and unstructured datasets with “rare cases”

- A systematic review of noise reduction best practices for machine learning algorithms in geoinformatics

- Exploring the feasibility of applying information theory to feature extraction in retail datasets

- Assessing the use case of neural network algorithms for image analysis in biodiversity assessment

Topics & Ideas: Artificial Intelligence (AI)

- Applying deep learning algorithms for speech recognition in speech-impaired children

- A review of the impact of artificial intelligence on decision-making processes in stock valuation

- An evaluation of reinforcement learning algorithms used in the production of video games

- An exploration of key developments in natural language processing and how they impacted the evolution of chatbots

- An analysis of the ethical and social implications of artificial intelligence-based automated marking

- The influence of large-scale GIS datasets on artificial intelligence and machine learning developments

- An examination of the use of artificial intelligence in orthopaedic surgery

- The impact of explainable artificial intelligence (XAI) on transparency and trust in supply chain management

- An evaluation of the role of artificial intelligence in financial forecasting and risk management in cryptocurrency

- A meta-analysis of deep learning algorithm performance in predicting cyber attacks in schools

Topics & Ideas: Networking

- An analysis of the impact of 5G technology on internet penetration in rural Tanzania

- Assessing the role of software-defined networking (SDN) in modern cloud-based computing

- A critical analysis of network security and privacy concerns associated with Industry 4.0 investment in healthcare

- Exploring the influence of cloud computing on security risks in fintech

- An examination of the use of network function virtualization (NFV) in telecom networks in South America

- Assessing the impact of edge computing on network architecture and design in IoT-based manufacturing

- An evaluation of the challenges and opportunities in 6G wireless network adoption

- The role of network congestion control algorithms in improving network performance on streaming platforms

- An analysis of network coding-based approaches for data security

- Assessing the impact of network topology on network performance and reliability in IoT-based workspaces

Topics & Ideas: Database Systems

- An analysis of big data management systems and technologies used in B2B marketing

- The impact of NoSQL databases on data management and analysis in smart cities

- An evaluation of the security and privacy concerns of cloud-based databases in financial organisations

- Exploring the role of data warehousing and business intelligence in global consultancies

- An analysis of the use of graph databases for data modelling and analysis in recommendation systems

- The influence of the Internet of Things (IoT) on database design and management in the retail grocery industry

- An examination of the challenges and opportunities of distributed databases in supply chain management

- Assessing the impact of data compression algorithms on database performance and scalability in cloud computing

- An evaluation of the use of in-memory databases for real-time data processing in patient monitoring

- Comparing the effects of database tuning and optimization approaches in improving database performance and efficiency in omnichannel retailing

Topics & Ideas: Human-Computer Interaction

- An analysis of the impact of mobile technology on human-computer interaction prevalence in adolescent men

- An exploration of how artificial intelligence is changing human-computer interaction patterns in children

- An evaluation of the usability and accessibility of web-based systems for CRM in the fast fashion retail sector

- Assessing the influence of virtual and augmented reality on consumer purchasing patterns

- An examination of the use of gesture-based interfaces in architecture

- Exploring the impact of ease of use in wearable technology on geriatric users

- Evaluating the ramifications of gamification in the Metaverse

- A systematic review of user experience (UX) design advances associated with Augmented Reality

- Comparing end-user perceptions of natural language processing algorithms for automated customer response

- Analysing the impact of voice-based interfaces on purchase practices in the fast food industry

Topics & Ideas: Information Security

- A bibliometric review of current trends in cryptography for secure communication

- An analysis of secure multi-party computation protocols and their applications in cloud-based computing

- An investigation of the security of blockchain technology in patient health record tracking

- A comparative study of symmetric and asymmetric encryption algorithms for instant text messaging

- A systematic review of secure data storage solutions used for cloud computing in the fintech industry

- An analysis of intrusion detection and prevention systems used in the healthcare sector

- Assessing security best practices for IoT devices in political offices

- An investigation into the role social media played in shifting regulations related to privacy and the protection of personal data

- A comparative study of digital signature scheme adoption in property transfers

- An assessment of the security of secure wireless communication systems used in tertiary institutions

Topics & Ideas: Software Engineering

- A study of agile software development methodologies and their impact on project success in pharmacology

- Investigating the impacts of software refactoring techniques and tools in blockchain-based developments

- A study of the impact of DevOps practices on software development and delivery in the healthcare sector

- An analysis of software architecture patterns and their impact on the maintainability and scalability of cloud-based offerings

- A study of the impact of artificial intelligence and machine learning on software engineering practices in the education sector

- An investigation of software testing techniques and methodologies for subscription-based offerings

- A review of software security practices and techniques for protecting against phishing attacks from social media

- An analysis of the impact of cloud computing on the rate of software development and deployment in the manufacturing sector

- Exploring the impact of software development outsourcing on project success in multinational contexts

- An investigation into the effect of poor software documentation on app success in the retail sector

CompSci & IT Dissertations/Theses

While the ideas we’ve presented above are a decent starting point for finding a CompSci-related research topic, they are fairly generic and non-specific. So, it helps to look at actual dissertations and theses to see how this all comes together.

Below, we’ve included a selection of research projects from various CompSci-related degree programs to help refine your thinking. These are actual dissertations and theses, written as part of Master’s and PhD-level programs, so they can provide some useful insight as to what a research topic looks like in practice.

- An array-based optimization framework for query processing and data analytics (Chen, 2021)

- Dynamic Object Partitioning and replication for cooperative cache (Asad, 2021)

- Embedding constructural documentation in unit tests (Nassif, 2019)

- PLASA | Programming Language for Synchronous Agents (Kilaru, 2019)

- Healthcare Data Authentication using Deep Neural Network (Sekar, 2020)

- Virtual Reality System for Planetary Surface Visualization and Analysis (Quach, 2019)

- Artificial neural networks to predict share prices on the Johannesburg stock exchange (Pyon, 2021)

- Predicting household poverty with machine learning methods: the case of Malawi (Chinyama, 2022)

- Investigating user experience and bias mitigation of the multi-modal retrieval of historical data (Singh, 2021)

- Detection of HTTPS malware traffic without decryption (Nyathi, 2022)

- Redefining privacy: case study of smart health applications (Al-Zyoud, 2019)

- A state-based approach to context modeling and computing (Yue, 2019)

- A Novel Cooperative Intrusion Detection System for Mobile Ad Hoc Networks (Solomon, 2019)

- HRSB-Tree for Spatio-Temporal Aggregates over Moving Regions (Paduri, 2019)

Looking at these titles, you can probably pick up that the research topics here are quite specific and narrowly focused, compared to the generic ones presented earlier. This is an important thing to keep in mind as you develop your own research topic. That is to say, to create a top-notch research topic, you must be precise and target a specific context with specific variables of interest. In other words, you need to identify a clear, well-justified research gap.

Fast-Track Your Research Topic

If you’re still feeling a bit unsure about how to find a research topic for your Computer Science dissertation or research project, check out our Topic Kickstarter service.

Computer Science and Engineering Theses and Dissertations

Theses/Dissertations from 2023

Refining the Machine Learning Pipeline for US-based Public Transit Systems, Jennifer Adorno

Insect Classification and Explainability from Image Data via Deep Learning Techniques, Tanvir Hossain Bhuiyan

Brain-Inspired Spatio-Temporal Learning with Application to Robotics, Thiago André Ferreira Medeiros

Evaluating Methods for Improving DNN Robustness Against Adversarial Attacks, Laureano Griffin

Analyzing Multi-Robot Leader-Follower Formations in Obstacle-Laden Environments, Zachary J. Hinnen

Secure Lightweight Cryptographic Hardware Constructions for Deeply Embedded Systems, Jasmin Kaur

A Psychometric Analysis of Natural Language Inference Using Transformer Language Models, Antonio Laverghetta Jr.

Graph Analysis on Social Networks, Shen Lu

Deep Learning-based Automatic Stereology for High- and Low-magnification Images, Hunter Morera

Deciphering Trends and Tactics: Data-driven Techniques for Forecasting Information Spread and Detecting Coordinated Campaigns in Social Media, Kin Wai Ng Lugo

Automated Approaches to Enable Innovative Civic Applications from Citizen Generated Imagery, Hye Seon Yi

Theses/Dissertations from 2022

Towards High Performing and Reliable Deep Convolutional Neural Network Models for Typically Limited Medical Imaging Datasets, Kaoutar Ben Ahmed

Task Progress Assessment and Monitoring Using Self-Supervised Learning, Sainath Reddy Bobbala

Towards More Task-Generalized and Explainable AI Through Psychometrics, Alec Braynen

A Multiple Input Multiple Output Framework for the Automatic Optical Fractionator-based Cell Counting in Z-Stacks Using Deep Learning, Palak Dave

On the Reliability of Wearable Sensors for Assessing Movement Disorder-Related Gait Quality and Imbalance: A Case Study of Multiple Sclerosis, Steven Díaz Hernández

Securing Critical Cyber Infrastructures and Functionalities via Machine Learning Empowered Strategies, Tao Hou

Social Media Time Series Forecasting and User-Level Activity Prediction with Gradient Boosting, Deep Learning, and Data Augmentation, Fred Mubang

A Study of Deep Learning Silhouette Extractors for Gait Recognition, Sneha Oladhri

Analyzing Decision-making in Robot Soccer for Attacking Behaviors, Justin Rodney

Generative Spatio-Temporal and Multimodal Analysis of Neonatal Pain, Md Sirajus Salekin

Secure Hardware Constructions for Fault Detection of Lattice-based Post-quantum Cryptosystems, Ausmita Sarker

Adaptive Multi-scale Place Cell Representations and Replay for Spatial Navigation and Learning in Autonomous Robots, Pablo Scleidorovich

Predicting the Number of Objects in a Robotic Grasp, Utkarsh Tamrakar

Humanoid Robot Motion Control for Ramps and Stairs, Tommy Truong

Preventing Variadic Function Attacks Through Argument Width Counting, Brennan Ward

Theses/Dissertations from 2021

Knowledge Extraction and Inference Based on Visual Understanding of Cooking Contents, Ahmad Babaeian Jelodar

Efficient Post-Quantum and Compact Cryptographic Constructions for the Internet of Things, Rouzbeh Behnia

Efficient Hardware Constructions for Error Detection of Post-Quantum Cryptographic Schemes, Alvaro Cintas Canto

Using Hyper-Dimensional Spanning Trees to Improve Structure Preservation During Dimensionality Reduction, Curtis Thomas Davis

Design, Deployment, and Validation of Computer Vision Techniques for Societal Scale Applications, Arup Kanti Dey

AffectiveTDA: Using Topological Data Analysis to Improve Analysis and Explainability in Affective Computing, Hamza Elhamdadi

Automatic Detection of Vehicles in Satellite Images for Economic Monitoring, Cole Hill

Analysis of Contextual Emotions Using Multimodal Data, Saurabh Hinduja

Data-driven Studies on Social Networks: Privacy and Simulation, Yasanka Sameera Horawalavithana

Automated Identification of Stages in Gonotrophic Cycle of Mosquitoes Using Computer Vision Techniques, Sherzod Kariev

Exploring the Use of Neural Transformers for Psycholinguistics, Antonio Laverghetta Jr.

Secure VLSI Hardware Design Against Intellectual Property (IP) Theft and Cryptographic Vulnerabilities, Matthew Dean Lewandowski

Turkic Interlingua: A Case Study of Machine Translation in Low-resource Languages, Jamshidbek Mirzakhalov

Automated Wound Segmentation and Dimension Measurement Using RGB-D Image, Chih-Yun Pai

Constructing Frameworks for Task-Optimized Visualizations, Ghulam Jilani Abdul Rahim Quadri

Trilateration-Based Localization in Known Environments with Object Detection, Valeria M. Salas Pacheco

Recognizing Patterns from Vital Signs Using Spectrograms, Sidharth Srivatsav Sribhashyam

Recognizing Emotion in the Wild Using Multimodal Data, Shivam Srivastava

A Modular Framework for Multi-Rotor Unmanned Aerial Vehicles for Military Operations, Dante Tezza

Human-centered Cybersecurity Research — Anthropological Findings from Two Longitudinal Studies , Anwesh Tuladhar

Learning State-Dependent Sensor Measurement Models To Improve Robot Localization Accuracy , Troi André Williams

Human-centric Cybersecurity Research: From Trapping the Bad Guys to Helping the Good Ones , Armin Ziaie Tabari

Theses/Dissertations from 2020

Classifying Emotions with EEG and Peripheral Physiological Data Using 1D Convolutional Long Short-Term Memory Neural Network , Rupal Agarwal

Keyless Anti-Jamming Communication via Randomized DSSS , Ahmad Alagil

Active Deep Learning Method to Automate Unbiased Stereology Cell Counting , Saeed Alahmari

Composition of Atomic-Obligation Security Policies , Yan Cao Albright

Action Recognition Using the Motion Taxonomy , Maxat Alibayev

Sentiment Analysis in Peer Review , Zachariah J. Beasley

Spatial Heterogeneity Utilization in CT Images for Lung Nodule Classification , Dmitrii Cherezov

Feature Selection Via Random Subsets Of Uncorrelated Features , Long Kim Dang

Unifying Security Policy Enforcement: Theory and Practice , Shamaria Engram

PsiDB: A Framework for Batched Query Processing and Optimization , Mehrad Eslami

Composition of Atomic-Obligation Security Policies , Danielle Ferguson

Algorithms To Profile Driver Behavior From Zero-permission Embedded Sensors , Bharti Goel

The Efficiency and Accuracy of YOLO for Neonate Face Detection in the Clinical Setting , Jacqueline Hausmann

Beyond the Hype: Challenges of Neural Networks as Applied to Social Networks , Anthony Hernandez

Privacy-Preserving and Functional Information Systems , Thang Hoang

Managing Off-Grid Power Use for Solar Fueled Residences with Smart Appliances, Prices-to-Devices and IoT , Donnelle L. January

Novel Bit-Sliced In-Memory Computing Based VLSI Architecture for Fast Sobel Edge Detection in IoT Edge Devices , Rajeev Joshi

Edge Computing for Deep Learning-Based Distributed Real-time Object Detection on IoT Constrained Platforms at Low Frame Rate , Lakshmikavya Kalyanam

Establishing Topological Data Analysis: A Comparison of Visualization Techniques , Tanmay J. Kotha

Machine Learning for the Internet of Things: Applications, Implementation, and Security , Vishalini Laguduva Ramnath

System Support of Concurrent Database Query Processing on a GPU , Hao Li

Deep Learning Predictive Modeling with Data Challenges (Small, Big, or Imbalanced) , Renhao Liu

Countermeasures Against Various Network Attacks Using Machine Learning Methods , Yi Li

Towards Safe Power Oversubscription and Energy Efficiency of Data Centers , Sulav Malla

Design of Support Measures for Counting Frequent Patterns in Graphs , Jinghan Meng

Automating the Classification of Mosquito Specimens Using Image Processing Techniques , Mona Minakshi

Models of Secure Software Enforcement and Development , Hernan M. Palombo

Functional Object-Oriented Network: A Knowledge Representation for Service Robotics , David Andrés Paulius Ramos

Lung Nodule Malignancy Prediction from Computed Tomography Images Using Deep Learning , Rahul Paul

Algorithms and Framework for Computing 2-body Statistics on Graphics Processing Units , Napath Pitaksirianan

Efficient Viewshed Computation Algorithms On GPUs and CPUs , Faisal F. Qarah

Relational Joins on GPUs for In-Memory Database Query Processing , Ran Rui

Micro-architectural Countermeasures for Control Flow and Misspeculation Based Software Attacks , Love Kumar Sah

Efficient Forward-Secure and Compact Signatures for the Internet of Things (IoT) , Efe Ulas Akay Seyitoglu

Detecting Symptoms of Chronic Obstructive Pulmonary Disease and Congestive Heart Failure via Cough and Wheezing Sounds Using Smart-Phones and Machine Learning , Anthony Windmon

Toward Culturally Relevant Emotion Detection Using Physiological Signals , Khadija Zanna

Theses/Dissertations from 2019

Beyond Labels and Captions: Contextualizing Grounded Semantics for Explainable Visual Interpretation , Sathyanarayanan Narasimhan Aakur

Empirical Analysis of a Cybersecurity Scoring System , Jaleel Ahmed

Phenomena of Social Dynamics in Online Games , Essa Alhazmi

A Machine Learning Approach to Predicting Community Engagement on Social Media During Disasters , Adel Alshehri

Interactive Fitness Domains in Competitive Coevolutionary Algorithm , ATM Golam Bari

Measuring Influence Across Social Media Platforms: Empirical Analysis Using Symbolic Transfer Entropy , Abhishek Bhattacharjee

A Communication-Centric Framework for Post-Silicon System-on-chip Integration Debug , Yuting Cao

Authentication and SQL-Injection Prevention Techniques in Web Applications , Cagri Cetin

Multimodal Emotion Recognition Using 3D Facial Landmarks, Action Units, and Physiological Data , Diego Fabiano

Robotic Motion Generation by Using Spatial-Temporal Patterns from Human Demonstrations , Yongqiang Huang

A GPU-Based Framework for Parallel Spatial Indexing and Query Processing , Zhila Nouri Lewis

A Flexible, Natural Deduction, Automated Reasoner for Quick Deployment of Non-Classical Logic , Trisha Mukhopadhyay

An Efficient Run-time CFI Check for Embedded Processors to Detect and Prevent Control Flow Based Attacks , Srivarsha Polnati

Force Feedback and Intelligent Workspace Selection for Legged Locomotion Over Uneven Terrain , John Rippetoe

Detecting Digitally Forged Faces in Online Videos , Neilesh Sambhu

Malicious Manipulation in Service-Oriented Network, Software, and Mobile Systems: Threats and Defenses , Dakun Shen


Computer science articles from across Nature Portfolio

Computer science is the study and development of the protocols required for automated processing and manipulation of data. This includes, for example, creating algorithms for efficiently searching large volumes of information or encrypting data so that it can be stored and transmitted securely.

Latest Research and Reviews

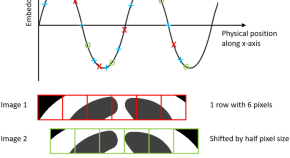

Co-ordinate-based positional embedding that captures resolution to enhance transformer’s performance in medical image analysis

- Badhan Kumar Das

- Gengyan Zhao

- Andreas Maier

A new method based on YOLOv5 and multiscale data augmentation for visual inspection in substation

- Junjie Chen

The benefits, risks and bounds of personalizing the alignment of large language models to individuals

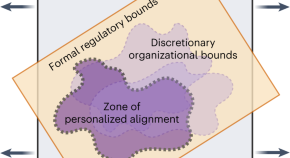

Tailoring the alignment of large language models (LLMs) to individuals is a new frontier in generative AI, but unbounded personalization can bring potential harm, such as large-scale profiling, privacy infringement and bias reinforcement. Kirk et al. develop a taxonomy for risks and benefits of personalized LLMs and discuss the need for normative decisions on what are acceptable bounds of personalization.

- Hannah Rose Kirk

- Bertie Vidgen

- Scott A. Hale

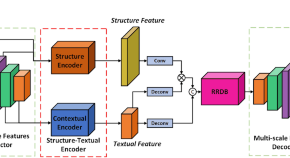

Dual-branch feature encoding framework for infrared images super-resolution reconstruction

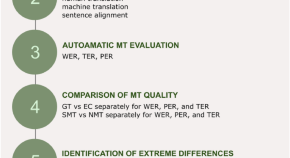

The use of residual analysis to improve the error rate accuracy of machine translation

- Ľubomír Benko

- Dasa Munkova

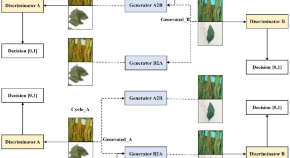

SM-CycleGAN: crop image data enhancement method based on self-attention mechanism CycleGAN

- Dabin Zhang

News and Comment

AI now beats humans at basic tasks — new benchmarks are needed, says major report

Stanford University’s 2024 AI Index charts the meteoric rise of artificial-intelligence tools.

- Nicola Jones

Medical artificial intelligence should do no harm

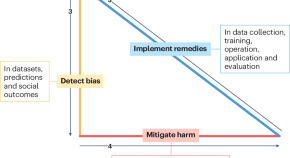

Bias and distrust in medicine have been perpetuated by the misuse of medical equations, algorithms and devices. Artificial intelligence (AI) can exacerbate these problems. However, AI also has potential to detect, mitigate and remedy the harmful effects of bias to build trust and improve healthcare for everyone.

- Melanie E. Moses

- Sonia M. Gipson Rankin

AI hears hidden X factor in zebra finch love songs

Machine learning detects song differences too subtle for humans to hear, and physicists harness the computing power of the strange skyrmion.

- Nick Petrić Howe

- Benjamin Thompson

Three reasons why AI doesn’t model human language

- Johan J. Bolhuis

- Stephen Crain

- Andrea Moro

Generative artificial intelligence in chemical engineering

Generative artificial intelligence will transform the way we design and operate chemical processes, argues Artur M. Schweidtmann.

- Artur M. Schweidtmann

Why scientists trust AI too much — and what to do about it

Some researchers see superhuman qualities in artificial intelligence. All scientists need to be alert to the risks this creates.

DigitalCommons@University of Nebraska - Lincoln

Computer Science and Engineering, Department of

School of Computing: Faculty Publications

Studying Developer Eye Movements to Measure Cognitive Workload and Visual Effort for Expertise Assessment , Salwa D. Aljehane, Bonita Sharif, and Jonathan I. Maletic

Co-Existence with IEEE 802.11 Networks in the ISM Band Without Channel Estimation , Muhammad Naveed Aman, Muhammad Ishfaq, and Biplab Sikdar

Dynamic Resource Optimization for Energy-Efficient 6G-IoT Ecosystems , James Adu Ansere, Mohsin Kamal, Izaz Ahmad Khan, and Muhammad Naveed Aman

Towards Modeling Human Attention from Eye Movements for Neural Source Code Summarization , Aakash Bansal, Bonita Sharif, and Collin McMillan

On Approximating Total Variation Distance , Arnab Bhattacharyya, Sutanu Gayen, Kuldeep S. Meel, Dimitrios Myrisiotis, A. Pavan, and N. V. Vinodchandran

Rapid: Region-Based Pointer Disambiguation , Khushboo Chitre, Piyus Kedia, and Rahul Purandare

Dynamic Field Programmable Logic-Driven Soft Exosuit , Frances Cleary, Witawas Srisa-an, David C. Henshall, and Sasitharan Balasubramaniam

Convolutional Neural Networks Analysis Reveals Three Possible Sources of Bronze Age Writings between Greece and India , Shruti Daggumati and Peter Z. Revesz

EmergeNet: A novel deep-learning based ensemble segmentation model for emergence timing detection of coleoptile , Aankit Das, Sruti Das Choudhury, Amit Kumar Das, Ashok Samal, and Tala Awada

Network Slicing via Transfer Learning aided Distributed Deep Reinforcement Learning , Tianlun Hu, Qi Liao, Qiang Liu, and Georg Carle

Relative Comparison of Modern Computing to Computer Technology of Ages , Iwasan D. Kejawa Dr. and Hailly Rubio Ms.

Conversion of fat to cellular fuel—Fatty acids 𝛽-oxidation model , Sylwester M. Kloska, Krzysztof Pałczyński, Tomasz Marciniak, Tomasz Talaśka, Marissa Miller, Beata J. Wysocki, Paul Davis, and Tadeusz A. Wysocki

AgRIS: wind-adaptive wideband reconfigurable intelligent surfaces for resilient wireless agricultural networks at millimeter-wave spectrum , Shuai Nie and M. C. Vuran

Perceptual cue-guided adaptive image downscaling for enhanced semantic segmentation on large document images , Chulwoo Pack, Leen-Kiat Soh, and Elizabeth Lorang

Ethical Design of Computers: From Semiconductors to IoT and Artificial Intelligence , Sudeep Pasricha and Marilyn Wolf

OSC-CO2: coattention and cosegmentation framework for plant state change with multiple features , Rubi Quiñones, Ashok Samal, Sruti Das Choudhury, and Francisco Muñoz-Arriola

A Generalization of the Chomsky-Halle Phonetic Representation using Real Numbers for Robust Speech Recognition in Noisy Environments , Peter Z. Revesz

A Markovian Error Model for False Negatives in DNN-based Perception-Driven Control Systems , Kruttidipta Samal, Thomas Walton, Tran Hoang-Dung, and Marilyn Wolf

3DGAUnet: 3D Generative Adversarial Networks with a 3D U-Net Based Generator to Achieve the Accurate and Effective Synthesis of Clinical Tumor Image Data for Pancreatic Cancer , Yu Shi, Hannah Tang, Michael J. Baine, Michael A. Hollingsworth, Huijing Du, Dandan Zheng, Chi Zhang, and Hongfeng Yu

Revealing gene regulation-based neural network computing in bacteria , Samitha S. Somathilaka, Sasitharan Balasubramaniam, Daniel P. Martins, and Xu Li

Extending the breadth of saliva metabolome fingerprinting by smart template strategies and effective pattern realignment on comprehensive two‑dimensional gas chromatographic data , Simone Squara, Friederike Manig, Thomas Henle, Michael Hellwig, Andrea Caratti, Carlo Bicchi, Stephen E. Reichenbach, Qingping Tao, Massimo Collino, and Chiara Cordero

MFA-DVR: direct volume rendering of MFA models , Jianxin Sun, David Lenz, Hongfeng Yu, and Tom Peterka

Metamobility: Connecting Future Mobility with Metaverse , Haoxin Wang, Ziran Wang, Dawei Chen, Qiang Liu, Hongyu Ke, and Kyungtae Han

A Light-weight Technique to Detect GPS Spoofing using Attenuated Signal Envelopes , Xiao Wei, Muhammad Naveed Aman, and Biplab Sikdar

From Laboratory to Field: Unsupervised Domain Adaptation for Plant Disease Recognition in the Wild , Xinlu Wu, Xijian Fan, Peng Luo, Sruti Das Choudhury, Tardi Tjahjadi, and Chunhua Hu

Leaf-Counting in Monocot Plants Using Deep Regression Models , Xinyan Xie, Yufeng Ge, Harkamal Walia, Jinliang Yang, and Hongfeng Yu

Next-Generation Sequencing Data-Based Association Testing of a Group of Genetic Markers for Complex Responses Using a Generalized Linear Model Framework , Zheng Xu, Song Yan, Cong Wu, Qing Duan, Sixia Chen, and Yun Li

Efficient Two-Stage Analysis for Complex Trait Association with Arbitrary Depth Sequencing Data , Zheng Xu, Song Yan, Shuai Yuan, Cong Wu, Sixia Chen, and Zifang Guo

A Roadmap for the Human Gut Cell Atlas , Matthias Zilbauer, Kylie R. James, Mandeep Kaur, Sebastian Pott, Zhixin Li, Albert Burger, Jay R. Thiagarajah, Joseph Burclaff, Frode L. Jahnsen, Francesca Perrone, Alexander D. Ross, Gianluca Matteoli, Nathalie Stakenborg, Tomohisa Sujino, Andreas Moor, Raquel Bartolome-Casado, Espen S. Bækkevold, Ran Zhou, Bingqing Xie, Ken S. Lau, Shahida Din, Scott T. Magness, Qiuming Yao, Semir Beyaz, Mark Arends, Alexandre Denadai-Souza, Lori A. Coburn, Jellert T. Gaublomme, Richard Baldock, Irene Papatheodorou, Jose Ordovas-Montanes, Guy Boeckxstaens, Anna Hupalowska, and Sarah A. Teichmann

MR-PIPA: An Integrated Multi-level RRAM (HfOx) based Processing-In-Pixel Accelerator , Minhaz Abedin, Arman Roohi, Maximilian Liehr, Nathaniel Cady, and Shaahin Angizi

Enabling Intelligent IoTs for Histopathology Image Analysis Using Convolutional Neural Networks , Mohammed H. Alali, Arman Roohi, Shaahin Angizi, and Jitender S. Deogun

Realizing Molecular Machine Learning through Communications for Biological AI: Future Directions and Challenges , Sasitharan Balasubramaniam, Samitha Somathilaka, Sehee Sun, Adrian Ratwatte, and Massimiliano Pierobon

Subjective Information and Survival in a Simulated Biological System , Tyler S. Barker, Massimiliano Pierobon, and Peter J. Thomas

ICEBAR: Feedback-Driven Iterative Repair of Alloy Specifications , Simón Gutiérrez Brida, Germán Regis, Guolong Zheng, Hamid Bagheri, ThanhVu Nguyen, Nazareno Aguirre, and Marcelo Frias

Security, Trust and Privacy for Cloud, Fog and Internet of Things , Chien-Ming Chen, Shehzad Ashraf Chaudhry, Kuo-Hui Yeh, and Muhammad Naveed Aman

The Road Not Taken: Exploring Alias Analysis Based Optimizations Missed by the Compiler , Khushboo Chitre, Piyus Kedia, and Rahul Purandare

Pitfalls and Guidelines for Using Time-Based Git Data , Samuel W. Flint, Jigyasa Chauhan, and Robert Dyer

Quasi-Spherical Absorbing Receiver Model of Glioblastoma Cells For Exosome-based Molecular Communications , Caio Fonseca, Michael Taynnan Barros, Andreani Odysseos, Srivatsan Kidambi, and Sasitharan Balasubramaniam

Incoherent and Online Dictionary Learning Algorithm for Motion Prediction , Farrukh Hafeez, Usman Ullah Sheikh, Asif Iqbal, and Muhammad Naveed Aman

Inter-Cell Slicing Resource Partitioning via Coordinated Multi-Agent Deep Reinforcement Learning , Tianlun Hu, Qi Liao, Qiang Liu, Dan Wellington, and Georg Carle

Using deep learning to detect digitally encoded DNA trigger for Trojan malware in Bio‑Cyber attacks , M. S. Islam, S. Ivanov, H. Awan, J. Drohan, Sasitharan Balasubramaniam, L. Coffey, Srivatsan Kidambi, and W. Sri-saan

Decision-Theoretic Planning with Communication in Open Multiagent Systems , Anirudh Kakarlapudi, Gayathri Anil, Adam Eck, Prashant Doshi, and Leen-Kiat Soh

Society Dilemma of Computer Technology Management in Today's World , Iwasan D. Kejawa Ed.D

Deep Reinforcement Learning for End-to-End Network Slicing: Challenges and Solutions , Qiang Liu, Nakjung Choi, and Tao Han

Real-Time Dynamic Map with Crowdsourcing Vehicles in Edge Computing , Qiang Liu, Tao Han, Jiang (Linda) Xie, and BaekGyu Kim

Internal Model Control (IMC)-Based Active and Reactive Power Control of Brushless Double-Fed Induction Generator with Notch Filter , Ahsanullah Memon, Mohd Wazir Bin Mustafa, Zohaib Hussain Laghari, Touqeer Ahmed Jumani, Waqas Anjum, Shafi Ullah, and Muhammad Naveed Aman

Systems-Based Approach for Optimization of Assembly-Free Bacterial MLST Mapping , Natasha Pavlovikj, Joao Carlos Gomes-Neto, Jitender Deogun, and Andrew Benson

Room-temperature polariton quantum fluids in halide perovskites , Kai Peng, Renjie Tao, Louis Haeberlé, Quanwei Li, Dafei Jin, Graham R. Fleming, Stéphane Kéna-Cohen, Xiang Zhang, and Wei Bao

What Makes the Article “Condition Monitoring and Fault Diagnosis of Electrical Motors—A Review” So Popular? , Wei Qiao

Decipherment Challenges Due to Tamga and Letter Mix-Ups in an Old Hungarian Runic Inscription from the Altai Mountains , Peter Revesz

Profiling a Community-Specific Function Landscape for Bacterial Peptides Through Protein-Level Meta-Assembly and Machine Learning , Mitra Vajjala, Brady Johnson, Lauren Kasparek, Michael Leuze, and Qiuming Yao

Nanomechanical Resonators: Toward Atomic Scale , Bo Xu, Pengcheng Zhang, Jiankai Zhu, Zuheng Liu, Alexander Eichler, Xu-Qian Zheng, Jaesung Lee, Aneesh Dash, Swapnil More, Song Wu, Yanan Wang, Hao Jia, Akshay Naik, Adrian Bachtold, Rui Yang, Philip X.-L. Feng, and Zenghui Wang

Neural Network Repair with Reachability Analysis , Xiaodong Yang, Tom Yamaguchi, Tran Hoang-Dung, Bardh Hoxha, Taylor T. Johnson, and Danil Prokhorov

High throughput analysis of leaf chlorophyll content in sorghum using RGB, hyperspectral, and fluorescence imaging and sensor fusion , Huichun Zhang, Yufeng Ge, Xinyan Xie, Abbas Atefi, Nuwan Wijewardane, and Suresh Thapa

Rethinking Sampled-Data Control for Unmanned Aircraft Systems , Xinkai Zhang and Justin M. Bradley

Deja Vu: semantics-aware recording and replay of high-speed eye tracking and interaction data to support cognitive studies of software engineering tasks—methodology and analyses , Vlas Zyrianov, Cole S. Peterson, Drew T. Guarnera, Joshua Behler, Praxis Weston, Bonita Sharif Ph.D., and Jonathan I. Maletic

Visual Growth Tracking for Automated Leaf Stage Monitoring Based on Image Sequence Analysis , Srinidhi Bashyam, Sruti Das Choudhury, Ashok Samal, and Tala Awada

Aerial Flight Paths for Communication , Alisha Bevins and Brittany Duncan

Facility Location Games with Ordinal Preferences , Hau Chan, Minming Li, and Chenhao Wang

Fingerlings mass estimation: A comparison between deep and shallow learning algorithms , Adair da Silva Oliveira Junior, Diego André Sant’Ana, Marcio Carneiro Brito Pache, Vanir Garcia, Vanessa Aparecida de Moares Weber, Gilberto Astolfi, Fabricio de Lima Weber, Geazy Vilharva Menezes, Gabriel Kirsten Menezes, Pedro Lucas França Albuquerque, Celso Soares Costa, Eduardo Quirino Arguelho de Queiroz, João Victor Araújo Rozales, Milena Wolff Ferreira, Marco Hiroshi Naka, and Hemerson Pistori

FIRE SUPPRESSION AND IGNITION WITH UNMANNED AERIAL VEHICLES , Carrick Detweiler, Sebastian Elbaum, James Higgins, Christian Laney, Craig Allen, Dirac L. Twidwell Jr, and Evan Michale Beachly

HyperSeed: An End-to-End Method to Process Hyperspectral Images of Seeds , Tian Gao, Anil Kumar Nalini Chandran, Puneet Paul, Harkamal Walia, and Hongfeng Yu

Novel 3D Imaging Systems for High-Throughput Phenotyping of Plants , Tian Gao, Feiyu Zhu, Puneet Paul, Jaspreet Sandhu, Henry Akrofi Doku, Jianxin Sun, Yu Pan, Paul Staswick, Harkamal Walia, and Hongfeng Yu

Human body-fluid proteome: quantitative profiling and computational prediction , Lan Huang, Dan Shao, Yan Wang, Xueteng Cui, Yufei Li, Qian Chen, and Juan Cui

University of Nebraska unmanned aerial system (UAS) profiling during the LAPSE-RATE field campaign , Ashraful Islam, Ajay Shankar, Adam Houston, and Carrick Detweiler

The Integral of Education Technology in the Society , Prof. Iwasan D. Kejawa Ed.D

Optimal Container Migration for Mobile Edge Computing: Algorithm, System Design and Implementation , Taewoon Kim, Motassem Al-Tarazi, Jenn-Wei Lin, and Wooyeol Choi

Microfluidic-based Bacterial Molecular Computing on a Chip , Daniel P. Martins, Michael Taynnan Barros, Benjamin O'Sullivan, Ian Seymour, Alan O'Riordan, Lee Coffey, Joseph Sweeney, and Sasitharan Balasubramaniam

A Task-Driven Feedback Imager with Uncertainty Driven Hybrid Control , Burhan A. Mudassar, Priyabrata Saha, Marilyn Wolf, and Saibal Mukhopadhyay

Model Counting meets F0 Estimation , A. Pavan, N. V. Vinodchandran, Arnab Bhattacharyya, and Kuldeep S. Meel

Multi-feature data repository development and analytics for image cosegmentation in high-throughput plant phenotyping , Rubi Quiñones, Francisco Munoz-Arriola, Sruti Das Choudhury, and Ashok Samal

A tiling algorithm-based string similarity measure , Peter Revesz

Combined Untargeted and Targeted Fingerprinting by Comprehensive Two-Dimensional Gas Chromatography to Track Compositional Changes on Hazelnut Primary Metabolome during Roasting , Marta Cialiè Rosso, Federico Stilo, Carlo Bicchi, Melanie Charron, Ginevra Rosso, Roberto Menta, Stephen Reichenbach, Christoph H. Weinert, Carina I. Mack, Sabine E. Kulling, and Chiara Cordero

DeepSec: a deep learning framework for secreted protein discovery in human body fluids , Dan Shao, Lan Huang, Yan Wang, Kai He, Xueteng Cui, Yao Wang, Qin Ma, and Juan Cui

A study of the generalizability of self-supervised representations , Atharva Tendle and Mohammad Rashedul Hasan

PhenoImage: An open-source graphical user interface for plant image analysis , Feiyu Zhu, Manny Saluja, Jaspinder Singh, Puneet Paul, Scott E. Sattler, Paul Staswick, Harkamal Walia, and Hongfeng Yu

SYSTEMS AND METHODS FOR REDUCING THE ACTUATION VOLTAGE FOR ELECTROSTATIC MEMS DEVICES , Fadi M. Alsaleem and Mohammad H. Hasan

Comparative evaluation of machine learning models for groundwater quality assessment , Shine Bedi, Ashok Samal, Chittaranjan Ray, and Daniel D. Snow

Validating a CS Attitudes Instrument , Ryan Bockmon, Stephen Cooper, Jonathan Gratch, and Mohsen Dorodchi

A CS1 Spatial Skills Intervention and the Impact on Introductory Programming Abilities , Ryan Bockmon, Stephen Cooper, William Koperski, Jonathan Gratch, Sheryl Sorby, and Mohsen Dorodchi

Elucidation of molecular links between obesity and cancer through microRNA regulation , Haluk Dogan, Jiang Shu, Zeynep M. Hakguder, Zheng Xu, and Juan Cui

Variability in the Effectiveness of Psychological Interventions based on Machine Learning in STEM Education , Mohammad Hasan and Bilal Khan

Resource Allocation and QoS Guarantees for Real World IP Traffic in Integrated XG-PON and IEEE802.11e EDCA Networks , Ravneet Kaur, Akshita Gupta, Anand Srivastava, Bijoy Chand Chatterjee, Abhijit Mitra, Byrav Ramamurthy, and Vivek Ashok Bohara

Global Technology Economic Analysis Paradigm , Iwasan D. Kejawa Ed.D

THE EFFECTS OF COMPUTER AND INFORMATION TECHNOLOGY ON EDUCATION , Iwasan D. Kejawa Ed.D

Development and Validation of the Computational Thinking Concepts and Skills Test , Markeya S. Peteranetz, Patrick M. Morrow, and Leen-Kiat Soh

A Multi-level Analysis of the Relationship between Instructional Practices and Retention in Computer Science , Markeya S. Peteranetz and Leen-Kiat Soh

ELECTROMAGNETIC POWER CONVERTER , Wei Qiao, Liyan Qu, and Haosen Wang

Estimating the maximum rise in temperature according to climate models using abstract interpretation , Peter Revesz and Robert J. Woodward

Paired Trial Classification: A Novel Deep Learning Technique for MVPA , Jacob M. Williams, Ashok Samal, Prahalada K. Rao, and Matthew R. Johnson

Platinum: Reusing Constraint Solutions in Bounded Analysis of Relational Logic , Guolong Zheng, Hamid Bagheri, Gregg Rothermel, and Jianghao Wang

Microbiome-Gut-Brain Axis as a Biomolecular Communication Network for the Internet of Bio-NanoThings , Ian F. Akyildiz, Jiande Chen, Maysam Ghovanloo, Ulkuhan Guler, Tevhide Ozkaya-Ahmadov, Massimiliano Pierobon, A Faith Sarioglu, and Bige D. Unluturk

Intercomparison of Small Unmanned Aircraft System (sUAS) Measurements for Atmospheric Science during the LAPSE-RATE Campaign , Lindsay Barbieri, Stephan T. Kral, Sean C. C. Bailey, Amy E. Frazier, Jamey D. Jacob, Joachim Reuder, David Brus, Phillip B. Chilson, Christopher Crick, Carrick Detweiler, Abhiram Doddi, Jack Elston, Hosein Foroutan, Javier Gonzalez-Rocha, Brian R. Greene, Marcelo I. Guzman, Adam L. Houston, Ashraful Islam, Osku Kemppinen, Dale Lawrence, Elizabeth A. Pillar-Little, Shane D. Ross, Michael Sama, David G. Schmale III, Travis J. Schuyler, Ajay Shankar, Suzanne W. Smith, Sean Waugh, Cory Dixon, Steve Borenstein, and Gijs de Boer

Discover IEEE Computer Society Publications

Unlock peer-reviewed research and expert commentary from the world’s trusted resource for computer science and engineering information.

We are the home to prestigious publications that deliver insights from the brightest minds in computing.

Full Range of Topics

High Impact Factors

Award-Winning Special Issues

Digital Library with 840,000 Articles

Publications by Topic

- IEEE Open Journal of the Computer Society

- IEEE Transactions on Computers

- IEEE Intelligent Systems

- IEEE Transactions on Pattern Analysis and Machine Intelligence

- IEEE/ACM Transactions on Computational Biology and Bioinformatics

- IEEE Transactions on Emerging Topics in Computing

- IEEE Computer Graphics and Applications

- IEEE MultiMedia

- IEEE Transactions on Visualization and Computer Graphics

- IEEE Computer Architecture Letters

- IEEE Annals of the History of Computing

- IEEE Transactions on Affective Computing

- IT Professional

- IEEE Internet Computing

- IEEE Transactions on Big Data

- IEEE Transactions on Cloud Computing

- IEEE Transactions on Knowledge and Data Engineering

- IEEE Transactions on Services Computing

- IEEE Pervasive Computing

- IEEE Transactions on Mobile Computing

- IEEE Transactions on Parallel and Distributed Systems

- Computing in Science & Engineering

- IEEE Security & Privacy

- IEEE Transactions on Dependable and Secure Computing

- IEEE Transactions on Privacy

- IEEE Software

- IEEE Transactions on Software Engineering

- IEEE Transactions on Sustainable Computing

Impact Factors

Impact factor (IF) measures how often a publication’s articles are cited and indicates its influence within a scientific community. IFs are reported by Clarivate Analytics Journal Citation Reports.

- IEEE Transactions on Pattern Analysis and Machine Intelligence earned a 2022 IF of 23.6, one of the highest of all artificial intelligence journals.

- Eleven Computer Society journals hold the coveted top IF ranking in their specialty field.

Recent Awards

- 2022 Mahoney Prize - IEEE Annals of the History of Computing, "Computing Capitalisms"

- 2021 APEX Award for Publication Excellence - IEEE Security & Privacy, "The Future of Cybersecurity Policy"

- Computer, "Technology Predictions"

- IEEE Security & Privacy, "Smart Cities: Requirements for Security, Privacy, and Trust"

- IEEE Software, "The Diversity Crisis in Software Development"

Computer Society Digital Library

All our magazines, journals, and conference proceedings can be found in the Computer Society Digital Library (CSDL) and the IEEE Xplore® digital library.

Many universities and institutions already have a subscription. Contact your librarian for details.

Individuals can access the CSDL at a discounted rate with IEEE Computer Society Membership. All Student members receive full access to the CSDL at no extra cost. Professional members receive 18 article downloads and can add full CSDL access for one flat rate using promo code CSDLTRACK. Professional members also have the option of subscribing to one or more publications within the CSDL.

- Individual Subscriptions

- Institutional Subscriptions

"Being the largest and most comprehensive collection of computer science resources available, the Computer Society Digital Library is a beacon of hope for academic libraries..."

— Manayer Ali Ahmed Naseeb, Director, Ahlia University

- View Calls for Papers

- Read Author Guidelines

- 8 Things Authors Should Know before Publishing

- Common Writing and Publishing Mistakes

- Publish Safely with Open Access Journals

- IEEE DataPort (Free Subscription for Members)

Peer Review Volunteer Resources

- Reviewer Resources

- Editor Resources

- Guest Editor Resources

- Editor-in-Chief Resources

Open Access Research

Our first Gold Open Access (OA) journal, the IEEE Open Journal of the Computer Society (OJ-CS), and our second, IEEE Transactions on Privacy (TP), are dedicated to publishing high-impact articles on emerging topics and trends in all aspects of computing and privacy, respectively. Both publications provide a rapid review cycle for authors looking to publish their research and are fully compliant with funder mandates, including Plan S. OJ-CS and TP content is available for free in the IEEE Computer Society Digital Library (CSDL) and the IEEE Xplore® digital library.

All our publications offer authors the opportunity to publish OA. Learn about hybrid publications.

Thank You to Our Volunteers!

Our publications are led by computing professionals from around the world.

View Volunteering Opportunities

More News & Research

ComputingEdge Newsletter

Access insightful content from 12 magazines, all in one FREE monthly subscription available to both members and non-members.

Colloquium Abstracts

Explore a sampling of recently published abstracts from our journals, offered as a complimentary benefit for periodical subscribers.

Read expert commentary and analysis on today’s cutting-edge advances in computer technology in a freely available online format.

Find career guides, technology predictions, and high-level summaries of the latest developments and discoveries in computing.



A not-for-profit organization, the Institute of Electrical and Electronics Engineers (IEEE) is the world's largest technical professional organization dedicated to advancing technology for the benefit of humanity.

Open research in computer science

Spanning networks and communications to security and cryptology to big data, complexity, and analytics, SpringerOpen and BMC publish one of the leading open access portfolios in computer science. Learn about our journals and the research we publish here on this page.

Highly-cited recent articles

Spotlight on

EPJ Data Science

See how EPJ Data Science brings attention to data science

Reasons to publish in Human-centric Computing and Information Sciences

Download this handy infographic to see all the reasons why Human-centric Computing and Information Sciences is a great place to publish.

We've asked a few of our authors about their experience of publishing with us.

What authors say about publishing in our journals:

- "Fast, transparent, and fair." (EPJ Data Science)

- "Easy submission process through online portal." (Journal of Cloud Computing)

- "Patient support and constant reminder at every phase." (Journal of Cloud Computing)

- "Quick and relevant." (Journal of Big Data)


Computer science blog posts

Read the latest from the SpringerOpen blog

The SpringerOpen blog highlights recent noteworthy research of general interest published in our open access journals.


Review of Computer Engineering Studies

- ISSN: 2369-0755 (print); 2369-0763 (online)

- Indexing & Archiving: EBSCOhost, Cabell's Directory, Publons, ScienceOpen, Google Scholar, Index Copernicus, CrossRef, Portico, Microsoft Academic, CNKI Scholar, Baidu Scholar

- Subject: Computer Sciences, Engineering

Review of Computer Engineering Studies (RCES) is an international, scholarly, peer-reviewed journal dedicated to providing scientists, engineers and technicians with the latest developments in computer science. The journal offers a window into the most recent discoveries in four categories: computing (computing theory, scientific computing, cloud computing, high-performance computing, numerical and symbolic computing), system (database systems, real-time systems, operating systems, warning systems, decision support systems, information processes and systems, systems integration), intelligence (robotics, bio-informatics, web intelligence, artificial intelligence), and application (security, networking, software engineering, pattern recognition, e-science and e-commerce, signal and image processing, control theory and applications). The editorial board welcomes original, substantiated papers on the above topics that could spark new scientific interest and benefit those devoted to computer science. The journal is published quarterly by the IIETA.

The RCES is an open access journal. All contents are available for free, that is, users are entitled to read, download, duplicate, distribute, print, search or link to the full texts of the articles in this journal without prior consent from the publisher or the author.

Focus and Scope

The RCES welcomes original research papers, technical notes and review articles in the following disciplines:

- Computing theory

- Scientific computing

- Cloud computing

- High-performance computing

- Numerical and symbolic computing

- Database systems

- Real-time systems

- Operating systems

- Warning systems

- Decision support systems

- Information processes and systems

- Systems integration

- Robotics

- Bio-informatics

- Web intelligence

- Artificial intelligence

- Security

- Networking

- Software engineering

- Pattern recognition

- E-science and e-commerce

- Signal and image processing

- Control theory and applications

Publication Frequency

The RCES is published quarterly by the IIETA, with four regular issues (excluding special issues) and one volume per year.

Peer Review Statement

The IIETA adopts a double-blind review process. Once submitted, a paper on a suitable topic is sent to the editor-in-chief or managing editor and then reviewed by at least two experts in the relevant field. The reviewers are either members of our editorial board or external experts invited by the journal. In light of the reviewers’ comments, the editor-in-chief or managing editor makes the final decision on publication and returns the decision to the author.

There are four possible decisions concerning the paper: acceptance, minor revision, major revision and rejection. Acceptance means the paper will be published directly without any revision. Minor revision means the author should make minor changes to the manuscript according to the reviewers’ comments and submit the revised version to the IIETA. Major revision means the author should modify the manuscript significantly according to the reviewers’ comments and submit the revised version to the IIETA. In both cases, the revised version will be accepted or rejected at the discretion of the editor-in-chief or managing editor. Rejection means the submitted paper will not be published.

If a paper is accepted, the editor-in-chief or managing editor will send an acceptance letter to the author and ask the author to prepare the paper in MS Word using the IIETA template.

Plagiarism Policy

Plagiarism is committed when an author uses another's work without permission, credit, or acknowledgment. Plagiarism takes different forms, from literal copying to paraphrasing the work of another. The IIETA uses CrossRef to screen for unoriginal material. Authors submitting to an IIETA journal should be aware that their paper may be submitted to CrossRef at any point during the peer-review or production process. Any allegations of plagiarism made to a journal will be investigated by the editor-in-chief or managing editor. If the allegations appear to be founded, we will ask all named authors of the paper to explain the overlapping material. If the explanation is not satisfactory, we will reject the submission, and may also reject future submissions.

For instructions on citing any of IIETA’s journals as well as our Ethics Statement, see Policies and Standards.

Indexing Information

- Portico

Web: https://www.portico.org/

- Index Copernicus

Web: http://journals.indexcopernicus.com

- Google Scholar

Web: http://scholar.google.com

- CNKI Scholar

Web: http://scholar.cnki.net

Included in

- Crossref.org

Web: https://www.crossref.org/


For Submission Inquiry

Email: [email protected]

- Just Published

- Featured Articles

- Most Read and Cited

- Winters’ Multiplicative Model Based Analysis of the Development and Prospects of New Energy Electric Vehicles in China. Zhishuo Jin, Yubing Qian

- Agent-based Analysis and Simulation of Online Shopping Behavior in the Context of Online Promotion. Xiaoyi Deng

- Study of Two Kinds of Analysis Methods of Intrusion Tolerance System State Transition Model. Zhiyong Luo, Xu Yang, Guanglu Sun, Zhiqiang Xie

- A Review on Automated Billing for Smart Shopping System Using IOT. Priyanka S. Sahare, Anup Gade, Jayant Rohankar