
Content Analysis | Guide, Methods & Examples

Published on July 18, 2019 by Amy Luo. Revised on June 22, 2023.

Content analysis is a research method used to identify patterns in recorded communication. To conduct content analysis, you systematically collect data from a set of texts, which can be written, oral, or visual:

- Books, newspapers and magazines

- Speeches and interviews

- Web content and social media posts

- Photographs and films

Content analysis can be both quantitative (focused on counting and measuring) and qualitative (focused on interpreting and understanding). In both types, you categorize or “code” words, themes, and concepts within the texts and then analyze the results.

What is content analysis used for?

Researchers use content analysis to find out about the purposes, messages, and effects of communication content. They can also make inferences about the producers and audience of the texts they analyze.

Content analysis can be used to quantify the occurrence of certain words, phrases, subjects or concepts in a set of historical or contemporary texts.

Quantitative content analysis example

To research the importance of employment issues in political campaigns, you could analyze campaign speeches for the frequency of terms such as unemployment, jobs, and work and use statistical analysis to find differences over time or between candidates.
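A minimal sketch of the counting step in this example, assuming the speeches are already available as plain strings. The speech snippets, the candidate labels, and the `count_terms` helper are hypothetical and invented for illustration.

```python
import re
from collections import Counter

# Hypothetical mini-corpus: (candidate, speech text) pairs invented for illustration.
speeches = [
    ("Candidate A", "Jobs, jobs, jobs. We will fight unemployment and reward hard work."),
    ("Candidate B", "Our plan protects work and creates jobs in every town."),
]

# Terms of interest, taken from the example above.
TERMS = {"unemployment", "jobs", "work"}


def count_terms(text, terms=TERMS):
    """Count how often each term of interest occurs in one text (case-insensitive)."""
    tokens = re.findall(r"[a-z']+", text.lower())
    return Counter(token for token in tokens if token in terms)


for candidate, text in speeches:
    print(candidate, dict(count_terms(text)))
```

The per-candidate counts could then feed a statistical comparison over time or between candidates. Exact word matching is a simplification: it would miss variants such as "job" or "working" unless the coding rules add them.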

In addition, content analysis can be used to make qualitative inferences by analyzing the meaning and semantic relationship of words and concepts.

Qualitative content analysis example

To gain a more qualitative understanding of employment issues in political campaigns, you could locate the word unemployment in speeches, identify what other words or phrases appear next to it (such as economy, inequality or laziness), and analyze the meanings of these relationships to better understand the intentions and targets of different campaigns.
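The "locate the word and look at its neighbors" step can be sketched as follows. This is only an illustration: the `neighbors` helper, the `span` parameter, and the sample sentence are assumptions, and interpreting what the neighboring words mean remains the researcher's job.

```python
import re
from collections import Counter


def neighbors(text, keyword, span=3):
    """Count the words found within `span` tokens on either side of `keyword`."""
    tokens = re.findall(r"[a-z']+", text.lower())
    counts = Counter()
    for i, token in enumerate(tokens):
        if token == keyword:
            left = tokens[max(0, i - span):i]
            right = tokens[i + 1:i + 1 + span]
            counts.update(left + right)
    return counts


# Hypothetical sentences invented for illustration.
speech = "Unemployment and inequality hurt the economy. Unemployment is not laziness."
print(neighbors(speech, "unemployment").most_common())
```

A wider `span` captures more context but also more noise, so the window size is itself a coding decision worth recording.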

Because content analysis can be applied to a broad range of texts, it is used in a variety of fields, including marketing, media studies, anthropology, cognitive science, psychology, and many social science disciplines. It has various possible goals:

- Finding correlations and patterns in how concepts are communicated

- Understanding the intentions of an individual, group or institution

- Identifying propaganda and bias in communication

- Revealing differences in communication in different contexts

- Analyzing the consequences of communication content, such as the flow of information or audience responses

Advantages of content analysis

- Unobtrusive data collection

You can analyze communication and social interaction without the direct involvement of participants, so your presence as a researcher doesn’t influence the results.

- Transparent and replicable

When done well, content analysis follows a systematic procedure that can easily be replicated by other researchers, yielding results with high reliability.

- Highly flexible

You can conduct content analysis at any time, in any location, and at low cost – all you need is access to the appropriate sources.

Disadvantages of content analysis

- Reductive

Focusing on words or phrases in isolation can sometimes be overly reductive, disregarding context, nuance, and ambiguous meanings.

- Subjective

Content analysis almost always involves some level of subjective interpretation, which can affect the reliability and validity of the results and conclusions, leading to various types of research bias and cognitive bias.

- Time intensive

Manually coding large volumes of text is extremely time-consuming, and it can be difficult to automate effectively.

How to conduct content analysis

If you want to use content analysis in your research, you need to start with a clear, direct research question.

Example research question for content analysis

Is there a difference in how the US media represents younger politicians compared to older ones in terms of trustworthiness?

Next, you follow these five steps.

1. Select the content you will analyze

Based on your research question, choose the texts that you will analyze. You need to decide:

- The medium (e.g. newspapers, speeches or websites) and genre (e.g. opinion pieces, political campaign speeches, or marketing copy)

- The inclusion and exclusion criteria (e.g. newspaper articles that mention a particular event, speeches by a certain politician, or websites selling a specific type of product)

- The parameters in terms of date range, location, etc.

If only a small number of texts meet your criteria, you might analyze all of them. If there is a large volume of texts, you can select a sample.
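As a rough sketch of this step, the snippet below applies inclusion criteria and then either keeps everything or draws a simple random sample. The record fields (`medium`, `published`), the `select_sample` helper, and the generated catalogue are all hypothetical. Simple random sampling is just one option, assumed here for brevity.

```python
import random
from datetime import date

# Hypothetical catalogue of texts, invented for illustration.
articles = [
    {"id": i, "medium": "newspaper" if i % 4 else "blog", "published": date(2019, 1 + i % 12, 1)}
    for i in range(40)
]


def select_sample(records, start, end, sample_size, seed=0):
    """Apply inclusion criteria, then draw a simple random sample if needed."""
    eligible = [
        r for r in records
        if r["medium"] == "newspaper" and start <= r["published"] <= end
    ]
    if len(eligible) <= sample_size:
        return eligible  # few enough texts: analyze all of them
    # A fixed seed makes the draw repeatable, which supports replication.
    return random.Random(seed).sample(eligible, sample_size)


sample = select_sample(articles, date(2019, 1, 1), date(2019, 6, 30), sample_size=5)
print(sorted(r["id"] for r in sample))
```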

2. Define the units and categories of analysis

Next, you need to determine the level at which you will analyze your chosen texts. This means defining:

- The unit(s) of meaning that will be coded. For example, are you going to record the frequency of individual words and phrases, the characteristics of people who produced or appear in the texts, the presence and positioning of images, or the treatment of themes and concepts?

- The set of categories that you will use for coding. Categories can be objective characteristics (e.g. aged 30-40, lawyer, parent) or more conceptual (e.g. trustworthy, corrupt, conservative, family oriented).

Your units of analysis are the politicians who appear in each article and the words and phrases that are used to describe them. Based on your research question, you have to categorize based on age and the concept of trustworthiness. To get more detailed data, you also code for other categories such as their political party and the marital status of each politician mentioned.

3. Develop a set of rules for coding

Coding involves organizing the units of meaning into the previously defined categories. Especially with more conceptual categories, it’s important to clearly define the rules for what will and won’t be included to ensure that all texts are coded consistently.

Coding rules are especially important if multiple researchers are involved, but even if you’re coding all of the text by yourself, recording the rules makes your method more transparent and reliable.

In considering the category “younger politician,” you decide which titles will be coded with this category (senator, governor, counselor, mayor). With “trustworthy,” you decide which specific words or phrases related to trustworthiness (e.g. honest and reliable) will be coded in this category.
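One way to make such rules explicit is to write them down as a codebook that a program (or another coder) can apply. The sketch below is an assumption-laden illustration: the `CODEBOOK` contents beyond "honest" and "reliable", the "untrustworthy" category, and the `code_text` helper are all hypothetical.

```python
import re

# Hypothetical codebook: each category lists the words that count toward it.
CODEBOOK = {
    "trustworthy": {"honest", "reliable", "dependable"},
    "untrustworthy": {"dishonest", "corrupt", "unreliable"},
}


def code_text(text, codebook=CODEBOOK):
    """Return {category: [matched words]} for one unit of text, per the written rules."""
    tokens = re.findall(r"[a-z]+", text.lower())
    return {
        category: [t for t in tokens if t in words]
        for category, words in codebook.items()
    }


print(code_text("Voters called the senator honest and reliable, not corrupt."))
```

Note that this naive rule codes "not corrupt" as untrustworthy. Cases like negation are exactly what written coding rules need to address, whether the coding is done by hand or by software.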

4. Code the text according to the rules

You go through each text and record all relevant data in the appropriate categories. This can be done manually or aided with computer programs, such as QSR NVivo, Atlas.ti and Diction, which can help speed up the process of counting and categorizing words and phrases.

Following your coding rules, you examine each newspaper article in your sample. You record the characteristics of each politician mentioned, along with all words and phrases related to trustworthiness that are used to describe them.

5. Analyze the results and draw conclusions

Once coding is complete, the collected data is examined to find patterns and draw conclusions in response to your research question. You might use statistical analysis to find correlations or trends, discuss your interpretations of what the results mean, and make inferences about the creators, context and audience of the texts.

Let’s say the results reveal that words and phrases related to trustworthiness appeared in the same sentence as an older politician more frequently than they did in the same sentence as a younger politician. From these results, you conclude that national newspapers present older politicians as more trustworthy than younger politicians, and infer that this might have an effect on readers’ perceptions of younger people in politics.
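The same-sentence comparison described in this example can be sketched as below. The politician names, the `AGE_GROUP` lookup, and the `trust_rate_by_group` helper are hypothetical. A real study would follow this with a statistical test rather than comparing raw shares.

```python
import re

TRUST_WORDS = {"honest", "reliable"}  # from the coding rules in step 3

# Hypothetical lookup built during coding: politician surname -> age group.
AGE_GROUP = {"rivera": "younger", "hughes": "older"}


def trust_rate_by_group(text):
    """Share of sentences mentioning each age group that also contain a trust word."""
    mentions = {"younger": 0, "older": 0}
    with_trust = {"younger": 0, "older": 0}
    for sentence in re.split(r"[.!?]+", text.lower()):
        tokens = set(re.findall(r"[a-z]+", sentence))
        for name, group in AGE_GROUP.items():
            if name in tokens:
                mentions[group] += 1
                if tokens & TRUST_WORDS:
                    with_trust[group] += 1
    # Skip groups that were never mentioned to avoid dividing by zero.
    return {g: with_trust[g] / mentions[g] for g in mentions if mentions[g]}


# Hypothetical article text invented for illustration.
article = (
    "Hughes is an honest leader. Hughes gave a reliable answer. "
    "Rivera spoke at length. Rivera was honest about the delay."
)
print(trust_rate_by_group(article))
```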


Luo, A. (2023, June 22). Content Analysis | Guide, Methods & Examples. Scribbr. Retrieved April 9, 2024, from https://www.scribbr.com/methodology/content-analysis/


Content Analysis

Content analysis is a research tool used to determine the presence of certain words, themes, or concepts within some given qualitative data (i.e. text). Using content analysis, researchers can quantify and analyze the presence, meanings, and relationships of such words, themes, or concepts. As an example, researchers can evaluate language used within a news article to search for bias or partiality. Researchers can then make inferences about the messages within the texts, the writer(s), the audience, and even the culture and time surrounding the text.

Description

Sources of data could be interviews, open-ended questions, field research notes, conversations, or literally any occurrence of communicative language (such as books, essays, discussions, newspaper headlines, speeches, media, historical documents). A single study may analyze various forms of text in its analysis. To analyze the text using content analysis, the text must be coded, or broken down, into manageable units for analysis (i.e. “codes”). Once the text is coded, the codes can then be grouped into “code categories” to summarize the data even further.

Three different definitions of content analysis are provided below.

Definition 1: “Any technique for making inferences by systematically and objectively identifying special characteristics of messages.” (from Holsti, 1968)

Definition 2: “An interpretive and naturalistic approach. It is both observational and narrative in nature and relies less on the experimental elements normally associated with scientific research (reliability, validity, and generalizability).” (from Ethnography, Observational Research, and Narrative Inquiry, 1994-2012)

Definition 3: “A research technique for the objective, systematic and quantitative description of the manifest content of communication.” (from Berelson, 1952)

Uses of Content Analysis

- Identify the intentions, focus or communication trends of an individual, group or institution

- Describe attitudinal and behavioral responses to communications

- Determine the psychological or emotional state of persons or groups

- Reveal international differences in communication content

- Reveal patterns in communication content

- Pre-test and improve an intervention or survey prior to launch

- Analyze focus group interviews and open-ended questions to complement quantitative data

Types of Content Analysis

There are two general types of content analysis: conceptual analysis and relational analysis. Conceptual analysis determines the existence and frequency of concepts in a text. Relational analysis develops the conceptual analysis further by examining the relationships among concepts in a text. Each type of analysis may lead to different results, conclusions, interpretations and meanings.

Conceptual Analysis

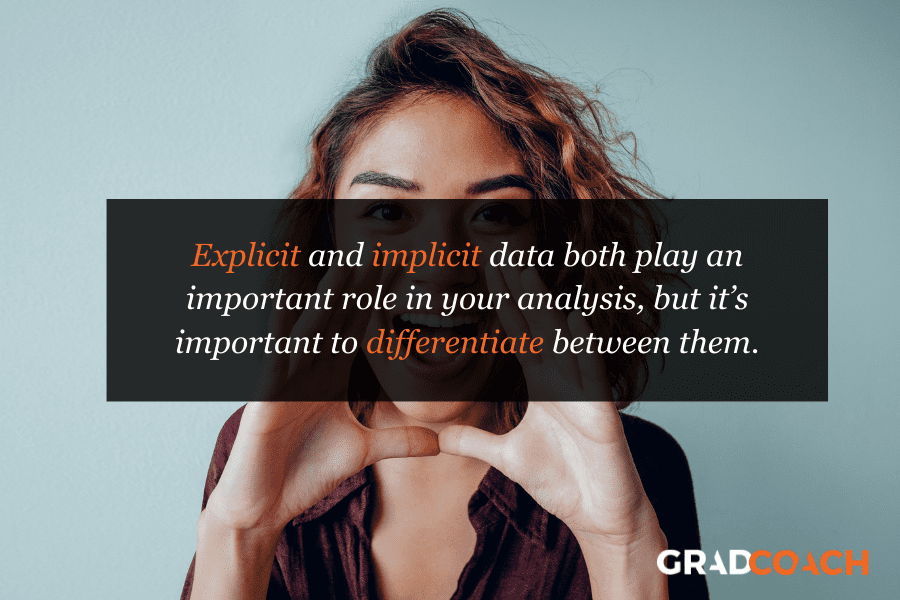

Typically people think of conceptual analysis when they think of content analysis. In conceptual analysis, a concept is chosen for examination and the analysis involves quantifying and counting its presence. The main goal is to examine the occurrence of selected terms in the data. Terms may be explicit or implicit. Explicit terms are easy to identify. Coding of implicit terms is more complicated: you need to decide the level of implication and base judgments on subjectivity (an issue for reliability and validity). Therefore, coding of implicit terms involves using a dictionary or contextual translation rules or both.

To begin a conceptual content analysis, first identify the research question and choose a sample or samples for analysis. Next, the text must be coded into manageable content categories. This is basically a process of selective reduction. By reducing the text to categories, the researcher can focus on and code for specific words or patterns that inform the research question.

General steps for conducting a conceptual content analysis:

1. Decide the level of analysis: word, word sense, phrase, sentence, themes

2. Decide how many concepts to code for: develop a pre-defined or interactive set of categories or concepts. Decide either: A. to allow flexibility to add categories through the coding process, or B. to stick with the pre-defined set of categories.

Option A allows for the introduction and analysis of new and important material that could have significant implications to one’s research question.

Option B allows the researcher to stay focused and examine the data for specific concepts.

3. Decide whether to code for existence or frequency of a concept. The decision changes the coding process.

When coding for the existence of a concept, the researcher counts a concept only once, no matter how many times it appears in the data.

When coding for the frequency of a concept, the researcher would count the number of times a concept appears in a text.

4. Decide on how you will distinguish among concepts:

Should words be coded exactly as they appear, or coded as the same when they appear in different forms? For example, “dangerous” vs. “dangerousness”. The point here is to create coding rules so that these word segments are transparently categorized in a logical fashion. The rules could make all of these word segments fall into the same category, or the rules could be formulated so that the researcher can distinguish these word segments into separate codes.

What level of implication is to be allowed? Words that imply the concept or words that explicitly state the concept? For example, “dangerous” vs. “the person is scary” vs. “that person could cause harm to me”. These word segments may not merit separate categories, due to the implicit meaning of “dangerous”.

5. Develop rules for coding your texts. After the decisions of steps 1-4 are complete, a researcher can begin developing rules for translating text into codes. This will keep the coding process organized and consistent. The researcher can code for exactly what they want to code. Validity of the coding process is ensured when the researcher is consistent and coherent in their codes, meaning that they follow their translation rules. In content analysis, obeying the translation rules is equivalent to validity.

6. Decide what to do with irrelevant information: should this be ignored (e.g. common English words like “the” and “and”), or used to reexamine the coding scheme in the case that it would add to the outcome of coding?

7. Code the text: This can be done by hand or by using software. By using software, researchers can input categories and have coding done automatically, quickly and efficiently, by the software program. When coding is done by hand, a researcher can recognize errors far more easily (e.g. typos, misspelling). If using computer coding, the text should first be cleaned of errors so that all available data is included. This decision of hand vs. computer coding is most relevant for implicit information where category preparation is essential for accurate coding.

8. Analyze your results: Draw conclusions and generalizations where possible. Determine what to do with irrelevant, unwanted, or unused text: reexamine, ignore, or reassess the coding scheme. Interpret results carefully as conceptual content analysis can only quantify the information. Typically, general trends and patterns can be identified.
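Several of the decisions in the steps above (existence vs. frequency in step 3, merging word forms in step 4, ignoring irrelevant words in step 6) can be expressed as explicit rules. The sketch below is one possible arrangement, not a prescribed implementation: `STOP_WORDS`, `FORM_TO_CONCEPT`, and `code_concepts` are hypothetical names, and the rule contents are invented for illustration.

```python
import re
from collections import Counter

# Hypothetical rules invented for illustration.
STOP_WORDS = {"the", "and", "a", "is", "of"}  # step 6: ignored as irrelevant
FORM_TO_CONCEPT = {                           # step 4: different forms coded as the same
    "dangerous": "danger",
    "dangerousness": "danger",
    "danger": "danger",
}


def code_concepts(text, mode="frequency"):
    """Code one text for concepts by existence (0/1) or frequency (count)."""
    tokens = [t for t in re.findall(r"[a-z]+", text.lower()) if t not in STOP_WORDS]
    counts = Counter(FORM_TO_CONCEPT[t] for t in tokens if t in FORM_TO_CONCEPT)
    if mode == "existence":
        # Step 3: a concept counts once, no matter how often it appears.
        return {concept: 1 for concept in counts}
    return dict(counts)


text = "The road is dangerous, and the dangerousness of the bend is known."
print(code_concepts(text, mode="existence"))
print(code_concepts(text, mode="frequency"))
```

To keep "dangerous" and "dangerousness" as separate codes instead, the mapping would simply assign them different concept labels. Implicit phrasings such as "that person could cause harm to me" are not handled here and would need hand coding or more elaborate rules.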

Relational Analysis

Relational analysis begins like conceptual analysis, where a concept is chosen for examination. However, the analysis involves exploring the relationships between concepts. Individual concepts are viewed as having no inherent meaning and rather the meaning is a product of the relationships among concepts.

To begin a relational content analysis, first identify a research question and choose a sample or samples for analysis. The research question must be focused so the concept types are not open to interpretation and can be summarized. Next, select text for analysis carefully, balancing two needs: enough information for a thorough analysis, so that results are not limited, but not so much that the coding process becomes too arduous to yield meaningful and worthwhile results.

There are three subcategories of relational analysis to choose from prior to going on to the general steps.

Affect extraction: an emotional evaluation of concepts explicit in a text. A challenge to this method is that emotions can vary across time, populations, and space. However, it could be effective at capturing the emotional and psychological state of the speaker or writer of the text.

Proximity analysis: an evaluation of the co-occurrence of explicit concepts in the text. Text is defined as a string of words called a “window” that is scanned for the co-occurrence of concepts. The result is the creation of a “concept matrix”, or a group of interrelated co-occurring concepts that would suggest an overall meaning.

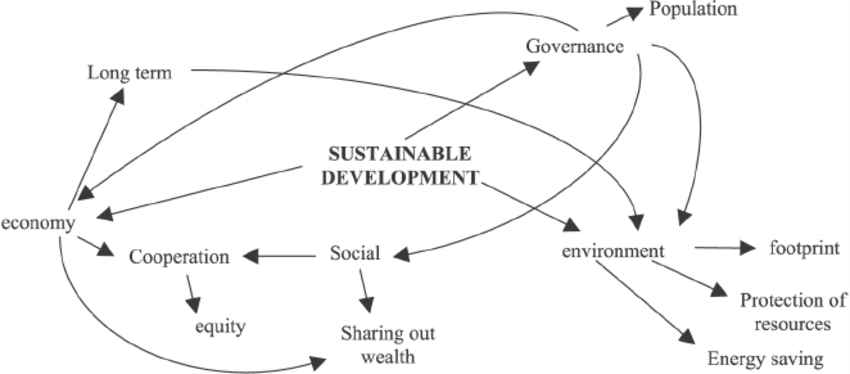

Cognitive mapping: a visualization technique for either affect extraction or proximity analysis. Cognitive mapping attempts to create a model of the overall meaning of the text such as a graphic map that represents the relationships between concepts.
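For proximity analysis, the idea of scanning a “window” of words for co-occurring concepts can be sketched as follows. This assumes a sliding window that moves one word at a time, which is one of several reasonable choices. The `CONCEPTS` set, the `concept_matrix` helper, and the sample text are hypothetical.

```python
import re
from collections import Counter
from itertools import combinations

CONCEPTS = {"unemployment", "economy", "inequality"}  # hypothetical explicit concepts


def concept_matrix(text, window=4):
    """Count how often each pair of concepts co-occurs within a sliding window of words."""
    tokens = re.findall(r"[a-z]+", text.lower())
    pairs = Counter()
    for start in range(max(1, len(tokens) - window + 1)):
        present = sorted(CONCEPTS.intersection(tokens[start:start + window]))
        pairs.update(combinations(present, 2))
    return pairs


# Hypothetical text invented for illustration.
matrix = concept_matrix("Unemployment weakens the economy. Inequality grows.")
for pair, count in matrix.items():
    print(pair, count)
```

Because the windows overlap, a pair of adjacent concepts is counted once for every window that contains both, so the counts reflect closeness as well as co-occurrence. Non-overlapping windows would give simpler counts but miss pairs that straddle a boundary.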

General steps for conducting a relational content analysis:

1. Determine the type of analysis: Once the sample has been selected, the researcher needs to determine what types of relationships to examine and the level of analysis: word, word sense, phrase, sentence, themes.

2. Reduce the text to categories and code for words or patterns. A researcher can code for existence of meanings or words.

3. Explore the relationship between concepts: once the words are coded, the text can be analyzed for the following:

Strength of relationship: degree to which two or more concepts are related.

Sign of relationship: are concepts positively or negatively related to each other?

Direction of relationship: the types of relationship that categories exhibit. For example, “X implies Y” or “X occurs before Y” or “if X then Y” or “X is the primary motivator of Y”.

4. Code the relationships: a difference between conceptual and relational analysis is that the statements or relationships between concepts are coded.

5. Perform statistical analyses: explore differences or look for relationships among the identified variables during coding.

6. Map out representations: such as decision mapping and mental models.

Reliability and Validity

Reliability: Because of the human nature of researchers, coding errors can never be eliminated but only minimized. Generally, 80% agreement is considered an acceptable margin for reliability. Three criteria comprise the reliability of a content analysis:

Stability: the tendency for coders to consistently re-code the same data in the same way over a period of time.

Reproducibility: the tendency for a group of coders to classify category membership in the same way.

Accuracy: extent to which the classification of text corresponds to a standard or norm statistically.
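Stability and reproducibility are often checked by comparing two sets of codes for the same units: two passes by one coder, or one pass each by two coders. The sketch below computes simple percent agreement, reading the 80% figure above as an agreement threshold, which is an assumption. The `percent_agreement` helper and the code lists are hypothetical.

```python
def percent_agreement(codes_a, codes_b):
    """Share of units that two coders (or two passes by one coder) coded identically."""
    if len(codes_a) != len(codes_b) or not codes_a:
        raise ValueError("Both code lists must be non-empty and the same length.")
    matches = sum(a == b for a, b in zip(codes_a, codes_b))
    return matches / len(codes_a)


# Hypothetical codes assigned to the same ten text units by two coders.
coder_1 = ["trust", "trust", "none", "distrust", "trust", "none", "none", "trust", "distrust", "none"]
coder_2 = ["trust", "none", "none", "distrust", "trust", "none", "trust", "trust", "distrust", "none"]

print(percent_agreement(coder_1, coder_2))
```

Percent agreement does not correct for agreement that would occur by chance, so it is best treated as a first check rather than a full reliability assessment.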

Validity: Three criteria comprise the validity of a content analysis:

Closeness of categories: this can be achieved by utilizing multiple classifiers to arrive at an agreed upon definition of each specific category. Using multiple classifiers, a concept category that may be an explicit variable can be broadened to include synonyms or implicit variables.

Conclusions: What level of implication is allowable? Do conclusions correctly follow the data? Are results explainable by other phenomena? This becomes especially problematic when using computer software for analysis and distinguishing between synonyms or between different senses of the same word. For example, the word “mine” variously denotes a possessive pronoun, an explosive device, and a deep hole in the ground from which ore is extracted. Software can obtain an accurate count of that word’s occurrence and frequency, but cannot produce an accurate accounting of the meaning inherent in each particular usage. This problem could throw off one’s results and make any conclusion invalid.

Generalizability of the results to a theory: dependent on the clear definitions of concept categories, how they are determined and how reliable they are at measuring the idea one is seeking to measure. Generalizability parallels reliability as much of it depends on the three criteria for reliability.

Advantages of Content Analysis

- Directly examines communication using text

- Allows for both qualitative and quantitative analysis

- Provides valuable historical and cultural insights over time

- Allows a closeness to data

- Coded form of the text can be statistically analyzed

- Unobtrusive means of analyzing interactions

- Provides insight into complex models of human thought and language use

- When done well, is considered a relatively “exact” research method

- Is a readily understood and inexpensive research method

- A more powerful tool when combined with other research methods such as interviews, observation, and use of archival records. It is very useful for analyzing historical material, especially for documenting trends over time.

Disadvantages of Content Analysis

- Can be extremely time consuming

- Is subject to increased error, particularly when relational analysis is used to attain a higher level of interpretation

- Is often devoid of theoretical base, or attempts too liberally to draw meaningful inferences about the relationships and impacts implied in a study

- Is inherently reductive, particularly when dealing with complex texts

- Tends too often to simply consist of word counts

- Often disregards the context that produced the text, as well as the state of things after the text is produced

- Can be difficult to automate or computerize

Textbooks & Chapters

Berelson, Bernard. Content Analysis in Communication Research. New York: Free Press, 1952.

Busha, Charles H. and Stephen P. Harter. Research Methods in Librarianship: Techniques and Interpretation. New York: Academic Press, 1980.

de Sola Pool, Ithiel. Trends in Content Analysis. Urbana: University of Illinois Press, 1959.

Krippendorff, Klaus. Content Analysis: An Introduction to its Methodology. Beverly Hills: Sage Publications, 1980.

Fielding, NG & Lee, RM. Using Computers in Qualitative Research. SAGE Publications, 1991. (Refer to Chapter by Seidel, J. ‘Method and Madness in the Application of Computer Technology to Qualitative Data Analysis’.)

Methodological Articles

Hsieh HF & Shannon SE. (2005). Three Approaches to Qualitative Content Analysis. Qualitative Health Research. 15(9): 1277-1288.

Elo S, Kaarianinen M, Kanste O, Polkki R, Utriainen K, & Kyngas H. (2014). Qualitative Content Analysis: A focus on trustworthiness. Sage Open. 4:1-10.

Application Articles

Abroms LC, Padmanabhan N, Thaweethai L, & Phillips T. (2011). iPhone Apps for Smoking Cessation: A content analysis. American Journal of Preventive Medicine. 40(3):279-285.

Ullstrom S, Sachs MA, Hansson J, Ovretveit J, & Brommels M. (2014). Suffering in Silence: a qualitative study of second victims of adverse events. British Medical Journal, Quality & Safety Issue. 23:325-331.

Owen P. (2012). Portrayals of Schizophrenia by Entertainment Media: A Content Analysis of Contemporary Movies. Psychiatric Services. 63:655-659.

Choosing whether to conduct a content analysis by hand or by using computer software can be difficult. Refer to ‘Method and Madness in the Application of Computer Technology to Qualitative Data Analysis’ listed above in “Textbooks and Chapters” for a discussion of the issue.

QSR NVivo: http://www.qsrinternational.com/products.aspx

Atlas.ti: http://www.atlasti.com/webinars.html

R- RQDA package: http://rqda.r-forge.r-project.org/

Rolly Constable, Marla Cowell, Sarita Zornek Crawford, David Golden, Jake Hartvigsen, Kathryn Morgan, Anne Mudgett, Kris Parrish, Laura Thomas, Erika Yolanda Thompson, Rosie Turner, and Mike Palmquist. (1994-2012). Ethnography, Observational Research, and Narrative Inquiry. Writing@CSU. Colorado State University. Available at: https://writing.colostate.edu/guides/guide.cfm?guideid=63 .

As an introduction to Content Analysis by Michael Palmquist, this is the main resource on Content Analysis on the Web. It is comprehensive, yet succinct. It includes examples and an annotated bibliography. The information contained in the narrative above draws heavily from and summarizes Michael Palmquist’s excellent resource on Content Analysis but was streamlined for the purpose of doctoral students and junior researchers in epidemiology.

At Columbia University Mailman School of Public Health, more detailed training is available through the Department of Sociomedical Sciences- P8785 Qualitative Research Methods.


Content Analysis – Methods, Types and Examples


Content Analysis

Definition:

Content analysis is a research method used to analyze and interpret the characteristics of various forms of communication, such as text, images, or audio. It involves systematically analyzing the content of these materials, identifying patterns, themes, and other relevant features, and drawing inferences or conclusions based on the findings.

Content analysis can be used to study a wide range of topics, including media coverage of social issues, political speeches, advertising messages, and online discussions, among others. It is often used in qualitative research and can be combined with other methods to provide a more comprehensive understanding of a particular phenomenon.

Types of Content Analysis

There are generally two types of content analysis:

Quantitative Content Analysis

This type of content analysis involves the systematic and objective counting and categorization of the content of a particular form of communication, such as text or video. The data obtained is then subjected to statistical analysis to identify patterns, trends, and relationships between different variables. Quantitative content analysis is often used to study media content, advertising, and political speeches.

Qualitative Content Analysis

This type of content analysis is concerned with the interpretation and understanding of the meaning and context of the content. It involves the systematic analysis of the content to identify themes, patterns, and other relevant features, and to interpret the underlying meanings and implications of these features. Qualitative content analysis is often used to study interviews, focus groups, and other forms of qualitative data, where the researcher is interested in understanding the subjective experiences and perceptions of the participants.

Methods of Content Analysis

There are several methods of content analysis, including:

Conceptual Analysis

This method involves analyzing the meanings of key concepts used in the content being analyzed. The researcher identifies key concepts and analyzes how they are used, defining them and categorizing them into broader themes.

Content Analysis by Frequency

This method involves counting and categorizing the frequency of specific words, phrases, or themes that appear in the content being analyzed. The researcher identifies relevant keywords or phrases and systematically counts their frequency.

Comparative Analysis

This method involves comparing the content of two or more sources to identify similarities, differences, and patterns. The researcher selects relevant sources, identifies key themes or concepts, and compares how they are represented in each source.

Discourse Analysis

This method involves analyzing the structure and language of the content being analyzed to identify how the content constructs and represents social reality. The researcher analyzes the language used and the underlying assumptions, beliefs, and values reflected in the content.

Narrative Analysis

This method involves analyzing the content as a narrative, identifying the plot, characters, and themes, and analyzing how they relate to the broader social context. The researcher identifies the underlying messages conveyed by the narrative and their implications for the broader social context.

Guide to Conducting Content Analysis

Here is a basic guide to conducting a content analysis:

- Define your research question or objective: Before starting your content analysis, you need to define your research question or objective clearly. This will help you to identify the content you need to analyze and the type of analysis you need to conduct.

- Select your sample: Select a representative sample of the content you want to analyze. This may involve selecting a random sample, a purposive sample, or a convenience sample, depending on the research question and the availability of the content.

- Develop a coding scheme: Develop a coding scheme or a set of categories to use for coding the content. The coding scheme should be based on your research question or objective and should be reliable, valid, and comprehensive.

- Train coders: Train coders to use the coding scheme and ensure that they have a clear understanding of the coding categories and procedures. You may also need to establish inter-coder reliability to ensure that different coders are coding the content consistently.

- Code the content: Code the content using the coding scheme. This may involve manually coding the content, using software, or a combination of both.

- Analyze the data: Once the content is coded, analyze the data using appropriate statistical or qualitative methods, depending on the research question and the type of data.

- Interpret the results: Interpret the results of the analysis in the context of your research question or objective. Draw conclusions based on the findings and relate them to the broader literature on the topic.

- Report your findings: Report your findings in a clear and concise manner, including the research question, methodology, results, and conclusions. Provide details about the coding scheme, inter-coder reliability, and any limitations of the study.
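The inter-coder reliability check mentioned in the steps above can be illustrated with Cohen's kappa, one commonly used agreement statistic for two coders. The guide does not prescribe a particular statistic, so this is only a minimal sketch; the function name and the sample codes are hypothetical.

```python
from collections import Counter

def cohens_kappa(coder_a, coder_b):
    """Cohen's kappa for two coders who labelled the same units."""
    if len(coder_a) != len(coder_b) or not coder_a:
        raise ValueError("both coders must label the same non-empty set of units")
    n = len(coder_a)
    # Observed agreement: share of units given the same code by both coders.
    observed = sum(a == b for a, b in zip(coder_a, coder_b)) / n
    # Agreement expected by chance, from each coder's marginal code frequencies.
    freq_a, freq_b = Counter(coder_a), Counter(coder_b)
    expected = sum(freq_a[c] * freq_b[c] for c in freq_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both coders used one identical code throughout
    return (observed - expected) / (1 - expected)

# Hypothetical codes assigned to six news items by two coders.
coder_a = ["economy", "health", "economy", "crime", "health", "economy"]
coder_b = ["economy", "health", "crime", "crime", "health", "economy"]
kappa = cohens_kappa(coder_a, coder_b)
```

Values near 1 indicate agreement well above chance, while values near 0 indicate agreement no better than chance. What counts as acceptable depends on the field and should be reported alongside the coding scheme.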

Applications of Content Analysis

Content analysis has numerous applications across different fields, including:

- Media Research: Content analysis is commonly used in media research to examine the representation of different groups, such as race, gender, and sexual orientation, in media content. It can also be used to study media framing, media bias, and media effects.

- Political Communication: Content analysis can be used to study political communication, including political speeches, debates, and news coverage of political events. It can also be used to study political advertising and the impact of political communication on public opinion and voting behavior.

- Marketing Research: Content analysis can be used to study advertising messages, consumer reviews, and social media posts related to products or services. It can provide insights into consumer preferences, attitudes, and behaviors.

- Health Communication: Content analysis can be used to study health communication, including the representation of health issues in the media, the effectiveness of health campaigns, and the impact of health messages on behavior.

- Education Research: Content analysis can be used to study educational materials, including textbooks, curricula, and instructional materials. It can provide insights into the representation of different topics, perspectives, and values.

- Social Science Research: Content analysis can be used in a wide range of social science research, including studies of social media, online communities, and other forms of digital communication. It can also be used to study interviews, focus groups, and other qualitative data sources.

Examples of Content Analysis

Here are some examples of content analysis:

- Media Representation of Race and Gender: A content analysis could be conducted to examine the representation of different races and genders in popular media, such as movies, TV shows, and news coverage.

- Political Campaign Ads: A content analysis could be conducted to study political campaign ads and the themes and messages used by candidates.

- Social Media Posts: A content analysis could be conducted to study social media posts related to a particular topic, such as the COVID-19 pandemic, to examine the attitudes and beliefs of social media users.

- Instructional Materials: A content analysis could be conducted to study the representation of different topics and perspectives in educational materials, such as textbooks and curricula.

- Product Reviews: A content analysis could be conducted to study product reviews on e-commerce websites, such as Amazon, to identify common themes and issues mentioned by consumers.

- News Coverage of Health Issues: A content analysis could be conducted to study news coverage of health issues, such as vaccine hesitancy, to identify common themes and perspectives.

- Online Communities: A content analysis could be conducted to study online communities, such as discussion forums or social media groups, to understand the language, attitudes, and beliefs of the community members.

Purpose of Content Analysis

The purpose of content analysis is to systematically analyze and interpret the content of various forms of communication, such as written, oral, or visual, to identify patterns, themes, and meanings. Content analysis is used to study communication in a wide range of fields, including media studies, political science, psychology, education, sociology, and marketing research. The primary goals of content analysis include:

- Describing and summarizing communication: Content analysis can be used to describe and summarize the content of communication, such as the themes, topics, and messages conveyed in media content, political speeches, or social media posts.

- Identifying patterns and trends: Content analysis can be used to identify patterns and trends in communication, such as changes over time, differences between groups, or common themes or motifs.

- Exploring meanings and interpretations: Content analysis can be used to explore the meanings and interpretations of communication, such as the underlying values, beliefs, and assumptions that shape the content.

- Testing hypotheses and theories: Content analysis can be used to test hypotheses and theories about communication, such as the effects of media on attitudes and behaviors or the framing of political issues in the media.

When to use Content Analysis

Content analysis is a useful method when you want to analyze and interpret the content of various forms of communication, such as written, oral, or visual. Here are some specific situations where content analysis might be appropriate:

- When you want to study media content: Content analysis is commonly used in media studies to analyze the content of TV shows, movies, news coverage, and other forms of media.

- When you want to study political communication: Content analysis can be used to study political speeches, debates, news coverage, and advertising.

- When you want to study consumer attitudes and behaviors: Content analysis can be used to analyze product reviews, social media posts, and other forms of consumer feedback.

- When you want to study educational materials: Content analysis can be used to analyze textbooks, instructional materials, and curricula.

- When you want to study online communities: Content analysis can be used to analyze discussion forums, social media groups, and other forms of online communication.

- When you want to test hypotheses and theories: Content analysis can be used to test hypotheses and theories about communication, such as the framing of political issues in the media or the effects of media on attitudes and behaviors.

Characteristics of Content Analysis

Content analysis has several key characteristics that make it a useful research method. These include:

- Objectivity: Content analysis aims to be an objective method of research, meaning that the researcher tries to keep their own biases and interpretations out of the analysis. This is pursued by using standardized and systematic coding procedures.

- Systematic: Content analysis involves the use of a systematic approach to analyze and interpret the content of communication. This involves defining the research question, selecting the sample of content to analyze, developing a coding scheme, and analyzing the data.

- Quantitative: Content analysis often involves counting and measuring the occurrence of specific themes or topics in the content, making it a quantitative research method. This allows for statistical analysis and generalization of findings.

- Contextual: Content analysis considers the context in which the communication takes place, such as the time period, the audience, and the purpose of the communication.

- Iterative: Content analysis is an iterative process, meaning that the researcher may refine the coding scheme and analysis as they analyze the data, to ensure that the findings are valid and reliable.

- Reliability and validity: Content analysis aims to be a reliable and valid method of research, meaning that the findings are consistent and accurate. This is achieved through inter-coder reliability tests and other measures to ensure the quality of the data and analysis.

Advantages of Content Analysis

There are several advantages to using content analysis as a research method, including:

- Objective and systematic: Content analysis aims to be an objective and systematic method of research, which reduces the likelihood of bias and subjectivity in the analysis.

- Large sample size: Content analysis allows for the analysis of a large sample of data, which increases the statistical power of the analysis and the generalizability of the findings.

- Non-intrusive: Content analysis does not require the researcher to interact with the participants or disrupt their natural behavior, making it a non-intrusive research method.

- Accessible data: Content analysis can be used to analyze a wide range of data types, including written, oral, and visual communication, making it accessible to researchers across different fields.

- Versatile: Content analysis can be used to study communication in a wide range of contexts and fields, including media studies, political science, psychology, education, sociology, and marketing research.

- Cost-effective: Content analysis is a cost-effective research method, as it does not require expensive equipment or participant incentives.

Limitations of Content Analysis

While content analysis has many advantages, there are also some limitations to consider, including:

- Limited contextual information: Content analysis is focused on the content of communication, which means that contextual information may be limited. This can make it difficult to fully understand the meaning behind the communication.

- Limited ability to capture nonverbal communication: Content analysis is limited to analyzing the content of communication that can be captured in written or recorded form. It may miss out on nonverbal communication, such as body language or tone of voice.

- Subjectivity in coding: While content analysis aims to be objective, there may be subjectivity in the coding process. Different coders may interpret the content differently, which can lead to inconsistent results.

- Limited ability to establish causality: Content analysis describes what is present in the content and can identify associations between variables, but on its own it cannot establish that one variable causes another.

- Limited generalizability: Content analysis is limited to the data that is analyzed, which means that the findings may not be generalizable to other contexts or populations.

- Time-consuming: Content analysis can be a time-consuming research method, especially when analyzing a large sample of data. This can be a disadvantage for researchers who need to complete their research in a short amount of time.

About the author

Muhammad Hassan

Researcher, Academic Writer, Web developer


Content Analysis | A Step-by-Step Guide with Examples

Published on 5 May 2022 by Amy Luo. Revised on 5 December 2022.

Content analysis is a research method used to identify patterns in recorded communication. To conduct content analysis, you systematically collect data from a set of texts, which can be written, oral, or visual:

- Books, newspapers, and magazines

- Speeches and interviews

- Web content and social media posts

- Photographs and films

Content analysis can be both quantitative (focused on counting and measuring) and qualitative (focused on interpreting and understanding). In both types, you categorise or ‘code’ words, themes, and concepts within the texts and then analyse the results.


What is content analysis used for?

Researchers use content analysis to find out about the purposes, messages, and effects of communication content. They can also make inferences about the producers and audience of the texts they analyse.

Content analysis can be used to quantify the occurrence of certain words, phrases, subjects, or concepts in a set of historical or contemporary texts.

In addition, content analysis can be used to make qualitative inferences by analysing the meaning and semantic relationship of words and concepts.

Because content analysis can be applied to a broad range of texts, it is used in a variety of fields, including marketing, media studies, anthropology, cognitive science, psychology, and many social science disciplines. It has various possible goals:

- Finding correlations and patterns in how concepts are communicated

- Understanding the intentions of an individual, group, or institution

- Identifying propaganda and bias in communication

- Revealing differences in communication in different contexts

- Analysing the consequences of communication content, such as the flow of information or audience responses


Advantages of content analysis

- Unobtrusive data collection

You can analyse communication and social interaction without the direct involvement of participants, so your presence as a researcher doesn’t influence the results.

- Transparent and replicable

When done well, content analysis follows a systematic procedure that can easily be replicated by other researchers, yielding results with high reliability.

- Highly flexible

You can conduct content analysis at any time, in any location, and at low cost. All you need is access to the appropriate sources.

Disadvantages of content analysis

- Reductive

Focusing on words or phrases in isolation can sometimes be overly reductive, disregarding context, nuance, and ambiguous meanings.

- Subjective

Content analysis almost always involves some level of subjective interpretation, which can affect the reliability and validity of the results and conclusions.

- Time intensive

Manually coding large volumes of text is extremely time-consuming, and it can be difficult to automate effectively.

How to conduct content analysis

If you want to use content analysis in your research, you need to start with a clear, direct research question.

Next, you follow these five steps.

Step 1: Select the content you will analyse

Based on your research question, choose the texts that you will analyse. You need to decide:

- The medium (e.g., newspapers, speeches, or websites) and genre (e.g., opinion pieces, political campaign speeches, or marketing copy)

- The criteria for inclusion (e.g., newspaper articles that mention a particular event, speeches by a certain politician, or websites selling a specific type of product)

- The parameters in terms of date range, location, etc.

If there are only a small number of texts that meet your criteria, you might analyse all of them. If there is a large volume of texts, you can select a sample.
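When the volume of texts is large, one option is a simple random sample. The sketch below assumes the texts are already collected in a list; the function name, the seed, and the placeholder article identifiers are hypothetical, and other sampling strategies may suit your research question better.

```python
import random

def draw_sample(texts, k, seed=0):
    """Return up to k texts chosen uniformly at random without replacement."""
    rng = random.Random(seed)  # fixed seed so the same sample can be redrawn
    return rng.sample(texts, min(k, len(texts)))

# Hypothetical corpus of 500 article identifiers.
corpus = [f"article_{i}" for i in range(1, 501)]
sample = draw_sample(corpus, 50)
```

Recording the seed alongside the inclusion criteria lets another researcher reproduce exactly the same sample.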

Step 2: Define the units and categories of analysis

Next, you need to determine the level at which you will analyse your chosen texts. This means defining:

- The unit(s) of meaning that will be coded. For example, are you going to record the frequency of individual words and phrases, the characteristics of people who produced or appear in the texts, the presence and positioning of images, or the treatment of themes and concepts?

- The set of categories that you will use for coding. Categories can be objective characteristics (e.g., aged 30–40, lawyer, parent) or more conceptual (e.g., trustworthy, corrupt, conservative, family-oriented).

Step 3: Develop a set of rules for coding

Coding involves organising the units of meaning into the previously defined categories. Especially with more conceptual categories, it’s important to clearly define the rules for what will and won’t be included to ensure that all texts are coded consistently.

Coding rules are especially important if multiple researchers are involved, but even if you’re coding all of the text by yourself, recording the rules makes your method more transparent and reliable.

Step 4: Code the text according to the rules

You go through each text and record all relevant data in the appropriate categories. This can be done manually or aided with computer programs, such as QSR NVivo, Atlas.ti, and Diction, which can help speed up the process of counting and categorising words and phrases.
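For word-level coding, the counting itself can also be scripted. The following is a minimal sketch and does not show how the programs named above work; the codebook, its categories, and the sample speech are hypothetical.

```python
import re
from collections import Counter

# Hypothetical codebook: each category lists the words that count towards it.
CODEBOOK = {
    "employment": {"unemployment", "jobs", "job", "work"},
    "economy": {"economy", "inflation", "taxes"},
}

def code_text(text, codebook=CODEBOOK):
    """Count how many words in `text` fall into each codebook category."""
    words = re.findall(r"[a-z']+", text.lower())
    counts = Counter()
    for word in words:
        for category, terms in codebook.items():
            if word in terms:
                counts[category] += 1
    return counts

speech = "Jobs, jobs, jobs. We will cut unemployment and grow the economy."
counts = code_text(speech)
```

A keyword match of this kind only captures explicit mentions. Conceptual categories still need written coding rules and human judgement.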

Step 5: Analyse the results and draw conclusions

Once coding is complete, the collected data is examined to find patterns and draw conclusions in response to your research question. You might use statistical analysis to find correlations or trends, discuss your interpretations of what the results mean, and make inferences about the creators, context, and audience of the texts.
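Before comparing counts across texts of different lengths, it usually helps to normalise them. The sketch below expresses term frequency per 1,000 words; the function name and the sample text are hypothetical, and this is only one of several reasonable normalisations.

```python
import re

def rate_per_thousand(text, terms):
    """Occurrences of any word in `terms` per 1,000 words of `text`."""
    words = re.findall(r"[a-z']+", text.lower())
    if not words:
        return 0.0
    hits = sum(word in terms for word in words)
    return 1000 * hits / len(words)

# Hypothetical 100-word text in which "jobs" appears three times.
text = "jobs " * 3 + "other " * 97
rate = rate_per_thousand(text, {"jobs"})
```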


Luo, A. (2022, December 05). Content Analysis | A Step-by-Step Guide with Examples. Scribbr. Retrieved 9 April 2024, from https://www.scribbr.co.uk/research-methods/content-analysis-explained/


The Content Analysis Guidebook

- Kimberly A. Neuendorf - Cleveland State University, USA


Useful resource- readable and accessible for diverse student groups

The book discusses one of the most popular communication research methods, which is discussed with students.

The book provides a practical and valuable toolkit for students of different Levels doing Content Analysis

This is an excellent book for undergraduate students interested in doing content analysis for their dissertations. It is straightforward and covers content at the appropriate level.

An excellent text for encouraging students to think beyond questionnaires and interviews when considering how they can collect and analyse data to say something about the social world.

Content analysis is one of the most used research methods in education. This book does nice job to introduce it.

It is a very good guide to content analysis which makes a nice job explaining core concepts and techniques.

This is an excellent and comprehensive guidebook for students, researchers and teachers.

I've waited a long time for the new version of this book. The new additions relating to the content analysis of the online environment are very successful (already in many of my syllabuses for next year). This is undoubtedly a must-read for any methodological course. Excellent reference book for any researcher analyzes content.

KEY FEATURES

- Numerous examples from across numerous disciplines give readers the ability to explain findings and predict future outcomes in a variety of contexts.

- Sidebar descriptions of innovative and wide-ranging content analysis projects, from both academia and commercial research, illustrate the interdisciplinary utility of content analysis.

- Pedagogical tools in an easy to understand format help readers unravel the complicated aspects of content analysis.

NEW TO THIS EDITION

- A new chapter on "Content Analysis in the Interactive Media Age" (Ch. 7) shows readers how to create, acquire, archive and code interactive media content.

- The "Integrative Model of Content Analysis," which explains how content analysis may be linked with source and/or receiver characteristics, has been revised to clarify a difference between "data links" and "logical links" among source-message-receiver components.

- New examples and updated references throughout keep readers up-to-date with the latest scholarship in content analysis and its application to everyday life.

- A new section focused specifically on validity gives readers a deeper understanding of measurement and illustrates how the standards of validity interrelate.

- A new resource section devoted to Computer Aided Text Analysis (CATA) programs such as Yoshikoder introduces readers to a growing set of options for automated analyses.


Encyclopedia of Quality of Life and Well-Being Research, pp 1258–1261

Content Analysis

Anat Zaidman-Zait

Content analysis is a research method that has been used increasingly in social and health research. Content analysis has been used either as a quantitative or a qualitative research method. Over the years, it expanded from being an objective quantitative description of manifest content to a subjective interpretation of text data dealing with theory generation and the exploration of underlying meaning.

Description

Content analysis is a research method that has been used increasingly in social and health research, including quality of life and well-being. Content analysis has been generally defined as a systematic technique for compressing many words of text into fewer content categories based on explicit rules of coding (Berelson, 1952; Krippendorff, 1980; Weber, 1990). Historically, content analysis was defined as “the objective, systematic and quantitative description of the manifest content of communication” (Berelson, 1952, p. 18). Initially, the manifest content was...


Berelson, B. (1952). Content analysis in communication research. Glencoe, IL: Free Press.

Burns, N., & Grove, S. K. (2005). The practice of nursing research: Conduct, critique & utilization. St. Louis, MO: Elsevier Saunders.

Elo, S., & Kyngas, H. (2007). The qualitative content analysis process. Journal of Advanced Nursing, 62, 107–115.

Gadermann, A. M., Guhn, M., & Zumbo, B. D. (2011). Investigating the substantive aspect of construct validity for the satisfaction with life scale adapted for children: A focus on cognitive processes. Social Indicators Research, 100, 37–60.

Graneheim, U. H., & Lundman, B. (2004). Qualitative content analysis in nursing research: Concepts, procedures and measures to achieve trustworthiness. Nurse Education Today, 24, 105–112.

Hsieh, H., & Shannon, S. E. (2005). Three approaches to qualitative content analysis. Qualitative Health Research, 15, 1277–1288.

Krippendorff, K. (1980). Content analysis: An introduction to its methodology. Beverly Hills, CA: Sage.

Neuendorf, K. A. (2002). The content analysis guidebook. Thousand Oaks, CA: Sage.

Norris, C. M., & King, K. (2009). A qualitative examination of the factors that influence women’s quality of life as they live with coronary artery disease. Western Journal of Nursing Research, 31, 513–524.

Spurgin, K. M., & Wildemuth, B. M. (2009). Content analysis. In B. Wildemuth (Ed.), Applications of social research methods to questions in information and library (pp. 297–307). Westport, CT: Libraries Unlimited.

Walsh, T. R., Irwin, D. E., Meier, A., Varni, J. W., & DeWalt, D. A. (2008). The use of focus groups in the development of the PROMIS pediatric item bank. Quality of Life Research, 17, 725–735.

Weber, R. P. (1990). Basic content analysis. Beverly Hills, CA: Sage.

Willig, C. (2008). Introducing qualitative research in psychology. Berkshire, UK: McGraw-Hill.

Zhang, Y., & Wildemuth, B. M. (2009). Qualitative analysis of content. In B. Wildemuth (Ed.), Applications of social research methods to questions in information and library (pp. 308–319). Westport, CT: Libraries Unlimited.

Author information

Anat Zaidman-Zait, Tel-Aviv University, Tel-Aviv, Israel (corresponding author)

Editor information

Alex C. Michalos, University of Northern British Columbia, Prince George, BC, Canada; (residence) Brandon, MB, Canada

Copyright information

© 2014 Springer Science+Business Media Dordrecht

Cite this entry

Zaidman-Zait, A. (2014). Content Analysis. In: Michalos, A.C. (eds) Encyclopedia of Quality of Life and Well-Being Research. Springer, Dordrecht. https://doi.org/10.1007/978-94-007-0753-5_552

Publisher Name: Springer, Dordrecht

Print ISBN: 978-94-007-0752-8

Online ISBN: 978-94-007-0753-5

eBook Packages: Humanities, Social Sciences and Law

What is content analysis?

Last updated: 20 March 2023. Reviewed by Miroslav Damyanov.

When you're conducting qualitative research, you'll find yourself analyzing various texts. Perhaps you'll be evaluating transcripts from audio interviews you've conducted. Or you may find yourself assessing the results of a survey filled with open-ended questions.


Content analysis is a research method used to identify the presence of various concepts, words, and themes in different texts. Two types of content analysis exist: conceptual analysis and relational analysis. In the former, researchers determine whether and how frequently certain concepts appear in a text. In relational analysis, researchers explore how different concepts are related to one another in a text.

Both types of content analysis require the researcher to code the text. Coding the text means breaking it down into different categories that allow it to be analyzed more easily.

What are some common uses of content analysis?

You can use content analysis to analyze many forms of text, including:

- Interview and discussion transcripts

- Newspaper articles and headlines

- Literary works

- Historical documents

- Government reports

- Academic papers

- Music lyrics

Researchers commonly use content analysis to draw insights and conclusions from literary works. Historians and biographers may apply this approach to letters, papers, and other historical documents to gain insight into the historical figures and periods they are writing about. Market researchers can also use it to evaluate brand performance and perception.

Some researchers have used content analysis to explore differences in decision-making and other cognitive processes. While researchers traditionally used this approach to explore human cognition, content analysis is also at the heart of machine learning approaches currently being used and developed by software and AI companies.

Conducting a conceptual analysis

Conceptual analysis is more commonly associated with content analysis than relational analysis.

In conceptual analysis, you're looking for the appearance and frequency of different concepts. Why? This information can help further your qualitative or quantitative analysis of a text. It's an inexpensive and easily understood research method that can help you draw inferences and conclusions about your research subject. And while it is a relatively straightforward analytical tool, it does consist of a multi-step process that you must closely follow to ensure the reliability and validity of your study.

When you're ready to conduct a conceptual analysis, refer to your research question and the text. Ask yourself what information likely found in the text is relevant to your question. You'll need to know this to determine how you'll code the text. Then follow these steps:

1. Determine whether you're looking for explicit terms or implicit terms.

Explicit terms are those that directly appear in the text, while implicit ones are those that the text implies or alludes to or that you can infer.

Coding for explicit terms is straightforward. For example, if you're looking to code a text for an author's explicit use of color, you'd simply code for every instance a color appears in the text. However, if you're coding for implicit terms, you'll need to determine and define how you're identifying the presence of the term first. Doing so involves a certain amount of subjectivity and may impinge upon the reliability and validity of your study.

2. Next, identify the level at which you'll conduct your analysis.

You can search for words, phrases, or sentences encapsulating your terms. You can also search for concepts and themes, but you'll need to define how you expect to identify them in the text. You must also define rules for how you'll code different terms to reduce ambiguity. For example, if, in an interview transcript, a person repeats a word one or more times in a row as a verbal tic, should you code it more than once? And what will you do with irrelevant data that appears in a term if you're coding for sentences?

Defining these rules upfront can help make your content analysis more efficient and your final analysis more reliable and valid.
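One way to make such a rule explicit is to encode it. The sketch below implements one possible answer to the verbal-tic question: immediate repetitions of the same word are collapsed and coded once. The rule and the function name are assumptions for illustration, not a recommendation for every study.

```python
import re

def collapse_repeats(text):
    """Collapse immediate repetitions of the same word into one occurrence."""
    words = re.findall(r"[A-Za-z']+", text)
    kept = []
    for word in words:
        # Keep a word only if it differs from the word kept just before it.
        if not kept or kept[-1].lower() != word.lower():
            kept.append(word)
    return kept

# Hypothetical transcript fragment with repeated words.
tokens = collapse_repeats("I I I really really like the the price")
```

Non-adjacent repetitions are left untouched, so a word that returns later in the sentence is still coded again.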

3. You'll need to determine whether you're coding for a concept or theme's existence or frequency.

If you're coding for its existence, you’ll only count it once, at its first appearance, no matter how many times it subsequently appears. If you're searching for frequency, you'll count the number of its appearances in the text.
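The difference between the two choices is easy to see in a small sketch. The function name, the `mode` parameter, and the color terms below are hypothetical.

```python
import re

def code_concept(text, terms, mode="frequency"):
    """Code a concept by existence (0 or 1) or by frequency (a count)."""
    words = re.findall(r"[a-z']+", text.lower())
    hits = sum(word in terms for word in words)
    if mode == "existence":
        return 1 if hits > 0 else 0  # counted once, however often it appears
    if mode == "frequency":
        return hits
    raise ValueError("mode must be 'existence' or 'frequency'")

colors = {"red", "blue", "green"}
text = "The red door opened onto a red hallway with a blue ceiling."
by_existence = code_concept(text, colors, mode="existence")
by_frequency = code_concept(text, colors, mode="frequency")
```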

4. You'll also want to determine the number of terms you want to code for and how you may wish to categorize them.

For example, say you're conducting a content analysis of customer service call transcripts and looking for evidence of customer dissatisfaction with a product or service. You might create categories that refer to different elements with which customers might be dissatisfied, such as price, features, packaging, technical support, and so on. Then you might look for sentences that refer to those product elements according to each category in a negative light.
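The customer service example could be sketched roughly as follows. The category terms and the list of negative cue words are hypothetical stand-ins for a real coding scheme, and simple keyword matching will miss sarcasm, negation, and implicit complaints.

```python
import re

# Hypothetical categories and the words that signal each one.
CATEGORIES = {
    "price": {"price", "cost", "expensive"},
    "features": {"feature", "features"},
    "packaging": {"packaging", "box"},
    "technical support": {"support", "helpline"},
}
# Hypothetical cue words that mark a sentence as negative.
NEGATIVE_CUES = {"bad", "poor", "terrible", "unhappy", "disappointed", "broken", "expensive"}

def code_sentences(transcript):
    """Group negative sentences under every category they mention."""
    coded = {category: [] for category in CATEGORIES}
    sentences = [s.strip() for s in re.split(r"[.!?]+", transcript) if s.strip()]
    for sentence in sentences:
        words = set(re.findall(r"[a-z']+", sentence.lower()))
        if not words & NEGATIVE_CUES:
            continue  # skip sentences with no negative cue
        for category, terms in CATEGORIES.items():
            if words & terms:
                coded[category].append(sentence)
    return coded

transcript = ("The price is fine. I am disappointed with the packaging. "
              "Support was terrible and the box arrived broken!")
coded = code_sentences(transcript)
```

Here a single sentence can be coded under more than one category, which is itself a rule worth stating in your coding scheme.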

5. Next, you'll need to develop translation rules for your codes.

Those rules should be clear and consistent, allowing you to keep track of your data in an organized fashion.

6. After you've determined the terms for which you're searching, your categories, and translation rules, you're ready to code.

You can do so by hand or via software. Software is quite helpful when you have multiple texts. But it also becomes more vital for you to have developed clear codes, categories, and translation rules, especially if you're looking for implicit terms and concepts. Otherwise, your software-driven analysis may miss key instances of the terms you seek.

7. When you have your text coded, it's time to analyze it.

Look for trends and patterns in your results and use them to draw relevant conclusions about your research subject.

- Conducting a relational analysis

In a relational analysis, you're examining the relationships between different terms that appear in your text(s). Doing so requires you to code your texts in much the same way as in a conceptual analysis. However, depending on the type of relational analysis you're trying to conduct, you may need to follow slightly different rules.

Three types of relational analyses are commonly used: affect extraction, proximity analysis, and cognitive mapping.

Affect extraction

This type of relational analysis involves evaluating the different emotional concepts found in a specific text. While the insights from affect extraction can be invaluable, conducting it may prove difficult depending on the text. For example, if the text captures people's emotional states at different times and from different populations, you may find it difficult to compare them and draw appropriate inferences.

Proximity analysis

A simpler analytical approach than affect extraction, proximity analysis assesses the co-occurrence of explicit concepts in a text. You can create what's known as a concept matrix, which is a group of interrelated co-occurring concepts. Concept matrices help you determine the overall meaning of a text or identify a secondary message or theme.
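A concept matrix can be approximated by counting how often pairs of concepts appear together. The sketch below assumes that "co-occurrence" means "appearing in the same sentence", which is only one possible definition, since a fixed word window is another. The function name `concept_matrix` and the sample text are hypothetical.

```python
import re
from collections import Counter
from itertools import combinations


def concept_matrix(text, concepts):
    """Count, for each pair of concepts, the sentences in which both appear."""
    concept_set = set(concepts)
    pair_counts = Counter()
    for sentence in re.split(r"(?<=[.!?])\s+", text.strip()):
        words = set(re.findall(r"[a-z']+", sentence.lower()))
        present = sorted(words & concept_set)  # sorted so each pair has one fixed order
        for pair in combinations(present, 2):
            pair_counts[pair] += 1
    return pair_counts


text = (
    "Unemployment hurts the economy. "
    "Inequality grows when unemployment rises. "
    "The economy and inequality are linked to unemployment."
)
matrix = concept_matrix(text, ["unemployment", "economy", "inequality"])
for pair, count in sorted(matrix.items()):
    print(pair, count)
# ('economy', 'inequality') 1
# ('economy', 'unemployment') 2
# ('inequality', 'unemployment') 2
```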

Cognitive mapping

You can use cognitive mapping as a way to visualize the results of either affect extraction or proximity analysis. This technique uses affect extraction or proximity analysis results to create a graphic map illustrating the relationship between co-occurring emotions or concepts.
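Before drawing a map, it can help to turn pairwise results into a simple graph structure. The sketch below builds an adjacency map from co-occurrence counts and keeps only the links that meet a threshold. The counts, the `build_concept_map` helper, and the `min_count` parameter are hypothetical, and the resulting structure could be passed to any graph-drawing tool.

```python
from collections import defaultdict


def build_concept_map(pair_counts, min_count=1):
    """Build an undirected adjacency map, keeping links with count >= min_count."""
    graph = defaultdict(dict)
    for (first, second), count in pair_counts.items():
        if count >= min_count:
            graph[first][second] = count
            graph[second][first] = count
    return dict(graph)


# Hypothetical co-occurrence counts from a proximity analysis.
pair_counts = {
    ("economy", "unemployment"): 2,
    ("inequality", "unemployment"): 2,
    ("economy", "inequality"): 1,
}

concept_map = build_concept_map(pair_counts, min_count=2)
for concept, neighbors in concept_map.items():
    print(concept, "->", neighbors)
```

With `min_count=2`, the weaker link between economy and inequality is dropped, leaving unemployment as the concept that connects the other two.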

To conduct a relational analysis, you must start by determining the type of analysis that best fits the study: affect extraction or proximity analysis.

Complete steps one through six as outlined above. When it comes to the seventh step, analyze the text according to the relational analysis type you've chosen. During this step, feel free to use cognitive mapping to help draw inferences and conclusions about the relationships between co-occurring emotions or concepts. Use other tools, such as mental modeling and decision mapping, as necessary to analyze the results.

- The advantages of content analysis

Content analysis provides researchers with a robust and inexpensive method to qualitatively and quantitatively analyze a text. By coding the data, you can perform statistical analyses of the data to affirm and reinforce conclusions you may draw. And content analysis can provide helpful insights into language use, behavioral patterns, and historical or cultural conventions that can be valuable beyond the scope of the initial study.

When applied to interview data, content analysis provides a way to closely analyze data without needing to interact with interview subjects, which can be helpful in certain contexts. For example, suppose you want to analyze the perceptions of a group of geographically diverse individuals. In this case, you can conduct a content analysis of existing interview transcripts rather than incurring the time and expense of conducting new interviews.

What is meant by content analysis?

Content analysis is a research method that helps a researcher explore the occurrence of and relationships between various words, phrases, themes, or concepts in a text or set of texts. The method allows researchers in different disciplines to conduct qualitative and quantitative analyses on a variety of texts.

Where is content analysis used?

Content analysis is used in multiple disciplines, as you can use it to evaluate a variety of texts. You can find applications in anthropology, communications, history, linguistics, literary studies, marketing, political science, psychology, and sociology, among other disciplines.

What are the two types of content analysis?

Content analysis may be either conceptual or relational. In a conceptual analysis, researchers examine a text for the presence and frequency of specific words, phrases, themes, and concepts. In a relational analysis, researchers draw inferences and conclusions about the nature of the relationships of co-occurring words, phrases, themes, and concepts in a text.

What's the difference between content analysis and thematic analysis?

Content analysis typically uses a descriptive approach to the data and may use either qualitative or quantitative analytical methods. By contrast, a thematic analysis only uses qualitative methods to explore frequently occurring themes in a text.

